#294: Adapting an Analytics Team to an AI World

AI is moving fast. But so is life. AI is widely recognized as a must-adopt technology, but how and where are data workers expected to find the time for that?! Organizations are struggling to find effective ways to drive healthy, productive adoption of AI: What do they expect their workers to do with AI? Is it purely an efficiency driver, or should they expect other avenues of value creation to be pursued? What guardrails need to be in place? What incentive structures are (and are not) effective when it comes to encouraging team members to take the AI plunge? One tactic that is definitely effective is to have leaders who are excited, engaged, and transparent as they get their hands dirty. And, boy, did the algorithm deliver one of those to us in the form of John Lovett, VP of Analytics at SEER Interactive, for this discussion!

Links to Resources Mentioned in the Show

- (Book) The *NEW* Big Book of KPIs: (Key Performance Indicators) by John Lovett

- Wil Reynolds

- AI Operating Manual: A Step-by-Step Guide to Teaching AI Systems How You Actually Think

- Twyman’s Law

- (Markdown file) Twyman’s Law Data Quality Module For LLM Analytics Integration & MCP Servers

- (Blog) Olympics analysis w/ AI by SEER (not published at time of recording, so we intended to track it down before this went live; if you’re reading this, Tim’s system failed and that did not happen!)

- (Blog) Live GEO Olympics Winter 2026 Results

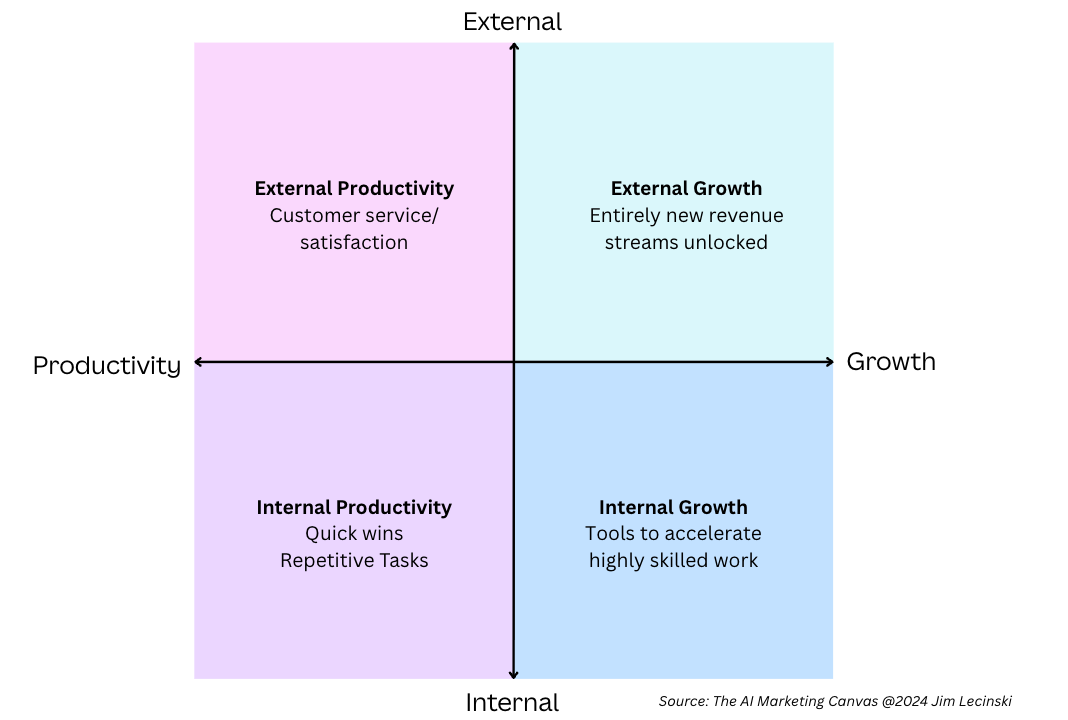

- (Book) The AI Marketing Canvas, Second Edition: A Five-Step AI Plan for Marketers by Rajkumar Venkatesan and Jim Lecinski

- The diagram that Moe referenced from the above book (also called out two episodes back!):

- (Podcast) The Artificial Intelligence Show – Paul Roetzer and Mike Kaput

- (Conference) MAICON: The Marketing Artificial Intelligence Conference – Oct. 13-15, 2026 in Cleveland, OH

- LinkedIn GEO Community

- (Substack) From Data to Product by Eric Weber

- (Book) Code Name Hélène: A Novel by Ariel Lawhon

- Data Kids Visualization Contest for Children

- (Conference) Marketing Analytics Summit – April 28-29, 2026 in Santa Barbara, CA

- Go to analyticshour.io/listener to submit a question for us to (potentially) answer when we record at Marketing Analytics Summit!

Photo by Maximalfocus on Unsplash

Episode Transcript

00:00:05.76 [Announcer]: Welcome to the Analytics Power Hour. Analytics topics covered conversationally and sometimes with explicit language.

00:00:15.87 [Michael Helbling]: Hi everybody, welcome. It’s the Analytics Power Hour. This is episode 294. Yeah, probably right after the old RTO policies got rolled out, you probably got another executive communication about going AI first or whatever the hell that means. And let’s be honest, I think we’re all making pretty heavy use of AI now in some capacity. But what does that even mean exactly? And specifically in our world of data? I mean, should I just be uploading all my data to Claude and letting it come up with my analysis? Or is it in planning, where you are constantly having to tell ChatGPT not to jump the gun and start writing SQL when you’re just exploring some concepts or ideas? I don’t know. So welcome to the AI Analytics Power Hour, I guess, this time. We’re going to talk about not just using it yourself, but how to think about rolling AI out across your team or your organization. As always, I’m joined by my co-hosts, Julie Hoyer. Welcome.

00:01:16.73 [Julie Hoyer]: I’m back.

00:01:17.39 [Michael Helbling]: Actually, welcome back. Glad to be here. Oh my gosh, yeah, of course. It’s like you’ve never been here. It’s been a while. It has been a while. And Moe Kiss, how you going? I’m going pretty good. Outstanding. And I’m Michael Helbling, and I’m excited for our returning guest. John Lovett is the VP of Analytics at SEER Interactive. He previously held leadership positions at Further and Web Analytics Demystified, as well as many other companies. He’s the author of at least a couple of books, the most recent one being The New Big Book of KPIs (Key Performance Indicators). And today he is our guest. Welcome back to the show, John. Thank you so much. It’s great to be here. Awesome. Well, it’s great to have you back. Finally, it’s been a long time. So it’s a long time coming. But I think what drove us to this was specifically how it seems like you and the whole team at SEER are really diving headfirst into using AI in significant ways across your organization and across the teams to do your work and to bring new ideas to life. Talk a little bit about what it’s like inside your four walls and how AI is impacting your work.

00:02:27.94 [John Lovett]: Yeah, yeah. Well, I’ll start by saying I’ve been at SEER now for a little over three and a half years, and for the last 18 to 24 months of that, I’ve just been immersed in it. And I’m not talking as an AI enthusiast. I lead my analytics division, I hold a P&L, and basically I’m making hard bets. And as the kids say, I’ve got some receipts to show for it. I’m obviously going to talk a lot about my experiences and how I rolled it out to my team. But the first thing I really want to say is that getting your hands dirty with AI isn’t optional anymore. You said this in your preamble, Michael. But doing it without accountability is really how you lose credibility. Every story I’m going to tell today is about building infrastructure that makes AI trustworthy, not just fast and not just doing more. This is a little trite, but I developed this when I first got to SEER: the tagline for my division is “We build trust in data and we empower people to use it.” And that hasn’t changed with AI. AI has come along, but what’s really fundamental is trust in data and really being able to use it. So that’s kind of the first thing that I’ll say about that. And if you indulge me and let me ramble a little more about SEER as a whole: what I’ve been able to accomplish starts at the top, and shout out to Wil Reynolds, the man, the myth, the legend. He’s the CEO. In fact, he doesn’t like to call himself CEO of SEER, but he founded SEER, and he basically said, guys, listen, we are all in on AI and everybody here needs to get on board. You don’t need to, but there’s the door. And that made for a big pivot. I want to go back to December 2025. It was a mandate for every single person in our company. I think we were about 175; we’re closer to 200 people now. Everyone had to take an AI training course and get certified in AI.
So it was a mandatory prerequisite. Everybody gets trained. And then we rolled around to summer of 2025 and Wil said, listen, everyone at this organization, from associate all the way up to executive and senior VP, has agency to take license to develop with AI: build the prototypes, show them to your clients as prototypes, get feedback, and let’s get them into production if people like them. So the company as a whole basically said, we’re all in on this. We’re going to give you the tools. We’re going to give you some training and let you loose on this, and really make it a part of our culture and a part of everybody’s job. I’ll pause there. I’ve got more on that, but I’ll pause there for a minute.

00:05:36.80 [Julie Hoyer]: Even with the leadership mandates, that is pretty big to hear: such big pivots, and saying they wanted it company-wide. That’s awesome to hear, because it sounds like it gives you and your team a lot of opportunities and the invitation to go try things out with AI, see what works, get ahead of some of these trends, or figure out what it could be best used for. But I am curious, even smaller, before the leadership mandates or right afterwards, what was your first thought around it, John? Or what was even your first small step? Because you were running a whole team. How did you, as a leader of an entire delivery team of analysts, go about that?

00:06:22.67 [John Lovett]: Yeah. You know, honestly, it was kind of like staring at a blank piece of paper: what do I do with this? I was just like everybody else. And I think my first experiences, which I do encourage people to try, were just using it for my personal life. I would literally open my refrigerator door, take a picture with ChatGPT, and say, what can I make for dinner? And it would look at it and say, oh, you’ve got pasta sauce there, I see some tomatoes, and I see a bell pepper. Here’s a recipe for you. And then I would just start using it and talking to it. My family and I took a trip to Ireland last Thanksgiving, back in 2024. And basically, I had to build our itinerary. And so we’re driving around Ireland, and of course, I had to put the ChatGPT Irish accent on the voice. And so my wife called it my girlfriend. And I was like, where should we go today? What restaurant should we go to in Galway? And it was giving me recommendations, and they were great. It told me where to stay. The funny thing started happening when I would talk to it and tell it things. You know, I said, hey, I’ve got three teenage boys. Here are the things we like to do. This is what we want to try to do on our own. It was like a month later, and I’m in the kitchen asking it something, probably what to cook, because I rely on that a lot. It thought I liked to cook, and I said, I only ask you because I hate to cook. I just need the ideas. And it said to me, how are the boys doing? What would they like for dinner? And that for me was like… and of course, like, this was my… Oh, see that?

00:08:00.48 [Julie Hoyer]: I feel like that would scare the shit out of me.

00:08:02.62 [John Lovett]: It totally scared me, because I was like, what? And I almost dropped the phone, because I was like, how do you even know that? And then the voice said back, well, I know that you went to Ireland with your boys, and you talk about them a lot. And it brought that up in conversation. And that for me blew my mind in terms of what the possibilities were. And that was all just my personal life, before I really even started getting into it with work.

00:08:28.23 [Moe Kiss]: Can I ask a little bit? I’ve had a similar experience where there’s been a lot of support from leadership down, in terms of everyone at the company being given access to every single tool that they could possibly want, enterprise grade. I do personally think that is an absolute game changer: I’m not going to tell you which tool to use, you can use any tool. But talk to me about the team experience, because I do feel there are those that are like, yeah, I’m going to roll my sleeves up, I’m going to get my hands dirty, that sort of stuff. And there are folks that are really scared, and they have that fear that it’s going to take their job. How did you navigate that team dynamic of the folks that are super keen versus the ones that are very apprehensive?

00:09:15.83 [John Lovett]: Yeah. Yeah. There were people on my team that were like, let me at it, I’m all in. Others were like, I don’t think so, I’m skeptical. One of the things that we did as an organization that I think helped open the door a lot was early last year; actually, it may have even been early 2025. As a company, every division looked at every single deliverable that we had. What do we regularly produce for clients? What are our workflows? How do we work? And then we built this huge list: of all these things we do, where can we start using AI to help us do them faster, more efficiently, better? We came up with a list at that time of 15 different workflows, deliverables, and processes that we did on a regular basis. Then we said, okay, we’re going to prioritize this subset in Horizon 1. We have horizons of work: Horizon 1 is the first build, with a series of builds in it; then we go to Horizon 2; and Horizon 3 is bigger thinking, stacking them all on top of each other. But that really gave us the opportunity to think about the day-to-day work that we do and how AI can be a part of that. I reiterate it at every team meeting. I’m going to hold this up for you guys; I don’t know if you’ll see it, and you probably can’t read it, but I keep a sticky note on my monitor that says, “How can AI help me do this?” And I genuinely think about that. It’s right here on my monitor, and I look at it all the time, because I encourage my team: when you’re going to do something, think about whether AI can help. There are plenty of things that AI cannot do for you, but there’s a lot that it can do. So that was sort of a door opener for people to say, oh, I do this every day. One quick example I’ll give you: we’re an agency, right? And despite my trying to squash this as much as I can, we live and die by the billable hour.
So we have to track time cards, right? And I’ve got team members that do it religiously and team members that just don’t. It’s not part of their workflow, not part of their habit. We built an agent for that: in our case, it’s a Claude instance connected to my calendar and to Wrike, which is our project management tool. We’ve got connections to all of our platforms, and I can say, hey, look at my calendar, see everything that’s on there, and populate my timecard for today or this week. Just that alone: I spent maybe less than an hour on that every day, but now it’s boom, I’ve got it done. And I use that same tool every morning: I get in and I say, what’s my morning briefing? What is the most important thing I have to do today? And it goes to my inbox. It goes to my Slack. It goes to all these different tools that I use every day. And it can say, oh, you’ve got a podcast tonight with the greatest podcasters on the planet; you need to prep for that. So it will tell me the most important things to do. And that for me has been such an eye-opener. I would sit at my desk on Monday and think, oh, crap, what do I have to do this week? And I would write it out with a pencil on a piece of paper. Now I can just ask my AI partners, what’s important for me? And that’s been a big change as well.
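The timecard agent is described only at a high level. As a rough illustration of the aggregation step such an agent performs, here is a minimal Python sketch that assumes calendar events have already been pulled into simple records tagged with a project code. The `CalendarEvent` type, its `project` field, quarter-hour rounding, and the sample events are all hypothetical choices for illustration, not how SEER’s agent actually works.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass
class CalendarEvent:
    """Hypothetical calendar record; a real calendar connector would supply these fields."""
    title: str
    start: datetime
    end: datetime
    project: str  # assumed billing code, e.g. mapped from the meeting title or attendees


def draft_timecard(events: list[CalendarEvent], increment: float = 0.25) -> dict[str, float]:
    """Sum event hours per project, rounded to the nearest billing increment."""
    totals: dict[str, float] = defaultdict(float)
    for event in events:
        hours = (event.end - event.start).total_seconds() / 3600
        totals[event.project] += hours
    # Round each project's total to the nearest increment (quarter hours by default).
    return {project: round(hours / increment) * increment for project, hours in totals.items()}


# Made-up sample events for illustration only.
events = [
    CalendarEvent("Client A weekly sync", datetime(2026, 3, 2, 9, 0), datetime(2026, 3, 2, 10, 0), "CLIENT-A"),
    CalendarEvent("Client A dashboard review", datetime(2026, 3, 2, 13, 0), datetime(2026, 3, 2, 13, 30), "CLIENT-A"),
    CalendarEvent("Podcast prep", datetime(2026, 3, 2, 15, 0), datetime(2026, 3, 2, 15, 45), "INTERNAL"),
]
print(draft_timecard(events))  # {'CLIENT-A': 1.5, 'INTERNAL': 0.75}
```

Keeping the rounding increment as a parameter lets the draft match whatever billing increment a team uses; the output is a starting point to review, not a submitted timecard.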

00:12:40.11 [Julie Hoyer]: Did you? Okay. This is a little nitty-gritty, but I’m curious. Some people still love, like, I still love to write a to-do list. Obviously that’s not AI friendly by any means, unless I put it somewhere digitally. Um, you do? Okay. So I’m curious: to help with that workflow of using AI to surface things to you, are you actually snapping a picture of your physical to-do lists? Are you retyping them? Are you dropping Slacks to yourself so that your AI will read them? Like,

00:13:09.07 [John Lovett]: A lot of times, and maybe this is me being an old guy, old school, I take notes when I’m on a call with a client and they’re just riffing and talking about stuff and I’m either trying to capture requirements or figure out what’s important to them. I want to be in that moment. Whenever I can, I have a transcriber recording the call so I have the whole transcript, and we can talk about that later. That’s critical for me to go back to, but I’m still taking those notes, because when I go to write a scope of work or proposal, or just update a report for a client or whatever I’m trying to do, that to me is like, okay, my brain interpreted this, I wrote it down, I know I need to get this done. I generally don’t take pictures of that and put them into Slack. A lot of times I will send myself Slack messages for reminders, but more often than not, those are links and different things that I want to come back to. But I do rely on pen and paper for my thinking process. And I still haven’t gotten away from the satisfaction of checking something off the list. I give that to myself. That’s so good.

00:14:21.81 [Tim Wilson]: Michael, quick question. How many times have you solved this same analytics problem this month?

00:14:29.56 [Michael Helbling]: Oh, probably enough times that I’m considering invoicing myself. Step one, fix GA4 source medium. Step two, lose the will to live.

00:14:39.21 [Tim Wilson]: Cool, so let’s stop living like that. Prism by Ask-Y lets you save your best workflows as skills: portable expertise you can reuse across datasets and tools.

00:14:50.95 [Michael Helbling]: Okay, so like normalize UTMs, dedupe leads, merge Facebook and Google spend, maybe rename 37 versions of “newsletter” into one civilized channel.

00:15:01.69 [Tim Wilson]: Exactly. You build it once, then you run it again, instead of recreating it like it’s Groundhog Day, but with more spreadsheets and less Bill Murray. I mean, I like Bill Murray, but I do like fewer tabs. Plus, you know, there was Andie MacDowell as well. But really, plus, there’s memory. It remembers context across sessions, like your org’s definition of active user, which table is the source of truth, and that one cursed date range where tracking, alas, broke.

00:15:31.61 [Michael Helbling]: So I don’t have to start every meeting with, “Before we begin, here’s the lore of our metrics”?

00:15:35.52 [Tim Wilson]: Yeah, or explaining that revenue means net of refunds here, not whatever looks best on the slide. Okay, well, my dashboard has now been personally attacked. Well, that’s good. My mission is complete. But skills plus memory means Prism gets smarter about your world over time: your processes, your definitions, your shortcuts.

00:16:03.31 [Michael Helbling]: So it’s like an assistant that actually remembers my preferences, like I’m not getting from most of my streaming apps.

00:16:10.86 [Tim Wilson]: Exactly. So if you want early access, you can go to ask.y and join the waitlist. Speaking of waitlist, use code APH when you sign up and you will be bumped right to the top of that list.

00:16:22.93 [Michael Helbling]: OK, good deal.

00:16:23.93 [Tim Wilson]: I’m already on the website. Well, we’ve been doing this ad spot for a while. I hope you, Michael, have already gone to the website and signed up. But for anyone else, that’s Ask the letter “y” dot A I and use code APH. Yeah, I’m putting myself in the place of the user.

00:16:40.47 [Michael Helbling]: It’s called empathy, Tim. Oh, well, I don’t understand that. And I’m already over here saving time and my sanity using these skills. Yeah. It’s too good. I take my notes online, but same thing: I’ve got the transcript plus the notes, and sometimes I can push them together into the prompt and use both. But yeah.

00:17:01.92 [Julie Hoyer]: Okay. Small tangent. Well, I’m just curious. So, John, you have your three boys. And I have a few wonderful people in my life who are in their teenage years, getting ready to go to college, and I fell into a very interesting conversation. So I’m curious what you tell your boys going into college, or maybe they’re already in college or out of college, in the AI times. I was talking to someone and they were telling me that they aren’t great at taking notes. And I kind of panicked for them, thinking, you’re about to go to college, you have to get really good at taking notes. And they said, yeah, but there’s AI. But as we just talked about, there still is this analyst skill of hearing certain things from a stakeholder, of having your own mental filter for what you think is important, what you really want to reiterate, or what you want to build a story around later, and being able to jot it down. I think it is a skill to actively listen and take your notes, whether you’re typing or writing. And I suddenly got a little worried, and I didn’t want to harp on them saying, well, you really need to learn how to do it. But it had me thinking. What are your thoughts?

00:18:13.43 [Moe Kiss]: I still take notes as well, even though there’s like a transcribe function. For me, I wouldn’t say it’s necessarily, sometimes it is like, what is the key points that I’m taking away that I really want to follow up, but I actually think it’s how I listen. Like for me, how I absorb the information, if I’m not taking notes, I will, my brain will probably go off on five different things. So I wonder if it’s like more a style thing than a like AI, not AI thing.

00:18:42.26 [Michael Helbling]: I have noticed in meetings, I will summarize and repeat in the meeting sometimes so that the AI picks up on it better. That’s a change I’ve noticed just for the transcript. I’ll be like, okay, so to summarize, we’re going to make sure we do this, this, and this. And then I know that the AI will come through the meeting transcript and pull that out as a to-do. So even my style of conducting the meeting is shifting a little bit, because I know that I don’t have to write that down; I know the AI will surface that as my to-do. But yeah, it’s crazy how we’re adapting.

00:19:14.79 [John Lovett]: We joke about that. I do that too, where we’re like, hey, transcribe or remember this piece, and we kind of joke. I did literally just take out a pen and paper, because I don’t want to forget the questions here. So, Julie, starting with the question: I definitely worry about the future for kids. My oldest is about to turn 21, which is a frightening thing in and of itself. He’s off at college in North Carolina. He may listen to this, but I’m such a proud dad: he changed his major. He was a finance major, and I said, what did you change to? And he’s like, Dad, I entered the business analytics program in the business school. And I said, what? Shut the front door. You do know that’s what I do, right? And he’s like, yeah, Dad, I know that’s what you do. So, such a proud dad moment. I actually wrote a blog post about this. He’s had dyslexia. He’s had learning challenges. He switched high schools because he wasn’t getting the support that he needed. To your point, his handwriting, even as an adult, was horrific. He just didn’t read like anybody else. He didn’t do things like everybody else. When he did math, he did it all in his head. He didn’t write out the problems. He just thinks differently. And so he has been a very early adopter of AI. And for good or for bad, as a high school student and now a college student, he’s also figured out how to use tools like ChatGPT and what have you and not have his teachers think that he wrote it with AI. So he’s got the anti-AI tools to figure that out, which, honestly, I’m like, buddy, I’m going to pay for that subscription. I’m going to pay for your ChatGPT subscription or whatever you need, because I want my kids, when they get out of college, to have this as part of their skill set. My middle son is a junior right now in high school, and we’re looking at colleges.
We went to Syracuse a couple of weekends ago, and those are my questions. I want to know from universities: how are you going to teach AI? Because with the university that says to me, oh, it’s off limits, they can’t use it, I’m going to be like, you know what, you’re not going to prepare McTin for the workforce; that might not be the way I want to go. And I just think it’s a matter of, we took notes, we listened and thought with our brains and our hands and recorded things. My kids are on their phones. They don’t actually know how to talk on a phone. They text. I don’t know if you guys have younger kids, but when you call a kid on the phone these days, they don’t even say hello. They don’t even say what. It’s weird. They know how to.

00:22:00.98 [Moe Kiss]: No, but they know it. I mean, my three-year-old knows how to like pinch and zoom and swipe and you’re like, what the?

00:22:07.63 [John Lovett]: It’s wild. So I just think about learning style. I’m seeing shifts with my own children, and I tell them, you may not plagiarize; don’t just take it and copy and paste it. You have to put your brain into this. It’s going to give you an output, but the output it gives you is generic slop. Until you put your voice into it and teach it and put your brain into it, it’s just a dictation machine or an answer engine; once it becomes a partnership, that’s when you really start to leverage the value of it. And there are little tricks that I do that we can talk about later, like teaching AI my voice. I uploaded my books, I uploaded my blog posts, I uploaded email examples. I said, this is how I write, this is how I talk, and when you’re writing on my behalf, I want you to mirror this, to add this to your knowledge, so that when you’re generating something for me, it sounds like me, legitimately like me. And in all of my agents and tools: no em dashes; I sign off my email messages with “Cheers”; I have all these little quirky things. I’m like, no jargon, don’t use buzzwords. I put in things like no pie charts, stuff like that. I’ll put those into my instructions. And in fact, if anybody wants this, I can give it as a resource. We were free to choose at our company, and I haven’t made the choice yet: do you want to go with ChatGPT or Claude? And I was like, well, if I give up one, how do I take all that teaching and learning and bring it to the other? So I developed a guide to be able to take it out of the model and ask: What is my personality? What do you know about me? How do I talk? How do I think? How do I act? And then give me those instructions that I can upload to my next agent so that it learns what I am like.
And I found that to be a transferable thing: if I suddenly lose access to one of these tools, how do I not lose all that history of what I’ve told it?
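One way to make preferences like these portable, sketched here under our own assumptions rather than taken from John’s guide, is to keep them in a plain data structure that can both render an instruction file for whichever assistant you move to and check drafts against the rules that are mechanically checkable. The `StyleProfile` class, its fields, and the example buzzwords are hypothetical; rules such as “no pie charts” can only be passed along as instructions, not checked in text.

```python
from dataclasses import dataclass, field


@dataclass
class StyleProfile:
    """Hypothetical, tool-agnostic record of personal writing preferences."""
    sign_off: str = "Cheers"
    # Characters to avoid, mapped to a readable name (U+2014 is the em dash).
    banned_chars: dict[str, str] = field(default_factory=lambda: {"\u2014": "em dash"})
    banned_words: list[str] = field(default_factory=lambda: ["synergy", "circle back"])
    other_rules: list[str] = field(default_factory=lambda: ["No jargon.", "No pie charts."])

    def to_instructions(self) -> str:
        """Render the profile as Markdown that can be uploaded to any assistant."""
        lines = ["# Writing style instructions", ""]
        lines.append(f'- Sign off emails with "{self.sign_off}".')
        lines.extend(f"- Do not use the {name} character." for name in self.banned_chars.values())
        lines.append("- Avoid these buzzwords: " + ", ".join(self.banned_words) + ".")
        lines.extend(f"- {rule}" for rule in self.other_rules)
        return "\n".join(lines)

    def check_draft(self, draft: str) -> list[str]:
        """Return violations of the rules that can be checked mechanically."""
        problems = [f"contains the {name} character"
                    for char, name in self.banned_chars.items() if char in draft]
        lowered = draft.lower()
        problems += [f"uses buzzword '{word}'" for word in self.banned_words if word in lowered]
        if not draft.rstrip().endswith(self.sign_off):
            problems.append(f"does not end with '{self.sign_off}'")
        return problems


profile = StyleProfile()
print(profile.to_instructions())
print(profile.check_draft("Quick update on the dashboard. Cheers"))  # []
# Reports the em dash, the buzzword, and the missing sign-off.
print(profile.check_draft("Let's find synergy \u2014 soon."))
```

Because the profile lives outside any one tool, switching assistants means re-uploading the rendered Markdown rather than re-teaching preferences from scratch.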

00:24:20.10 [Moe Kiss]: Okay, John, you’re brilliant. And yes, we want all the resources, absolutely, because I’m literally doing that at the moment. No comment on politics at the moment, but yes, migrating from one tool to another. But okay, what you’re talking about is fundamentally leading by example, putting in the time and effort to do it well. Back to your team. I am sure there are people that are dabbling and just producing utter shit. And as someone on the receiving end of reading lots of shit, it’s like that exploration period. I guess what I want to better understand is, you have gotten to the value point, I think probably quicker than most. How do you help your team get to that value point more quickly? Because the feedback is always, I’m busy, I don’t have time, and I’m the first to say all of those things. How did you create the time for both yourself and your team to get to value faster?

00:25:17.97 [John Lovett]: Yeah, yeah. So, time: you still have to do your day job. So for me, I do end up working more. I try to tell my team not to do that, but sometimes it happens. But putting that aside for a second: one of the things I did early on last year, because I’m a huge believer in conversational analytics (I think it’s coming for us all), was build a conversational analytics setup, which is how you get an MCP to communicate with whatever LLM you choose: ChatGPT, Claude, whatever you want. I started with GA4 because that’s what we had the most access to, and I expanded it to BigQuery. And I said, everybody on the team has to make these connections. Use the MCP, follow the instructions, set it up so that you have the ability to talk to your data with these tools. Some jumped right into it, some went along begrudgingly, some were slower to act. It was not even a week, it was the first few days of doing this exercise, when one of the analysts on my team posted on our company-wide Slack: oh my gosh, our blog post just went viral. We had a huge spike in traffic. It’s amazing. This blog has never seen this much traffic. Wil, our CEO, has all the agents and the MCP connected as well. He’s a big runner, right? He’s a marathoner. He talks to Claude through his headphones as he’s running, and he’s like, hey, so-and-so just posted that we had this big viral spike, and he started asking questions. He’s like, I’m just curious, where did this traffic come from? And the agent responded, talking to him: oh, it looks like 99% is from China. And then he goes deeper: oh, really? What kind of traffic is this? Did they bounce right away? What did they engage with? And it came back that all the visits were sub one second, or whatever it was. Come to find out it was a bot. A bot was hammering our site. My analyst team was like, hey, we made this great discovery.
Look at me. I got this new conversational analytics thing to work. And that was a screech-hit-the-brakes, needle-off-the-record moment for me. I was like, wait a minute, I’ve got to put some controls on this. That same week, I had seen, actually, I think Tim Wilson reposted, oh, and I’m going to forget his name, Twyman’s Law. I’ll come back to who gave me the reference; we’ll get this in the show notes. I wrote a lengthy post about it. But Twyman’s Law, for those who don’t know, is essentially: if any number looks too good to be true, it probably is. Twyman never really published this. It was word of mouth, and it got around, and it has become this marketing staple. And so I was like, hey, that would really work for my conversational agents. Why don’t I build that in to say: if you’re seeing this huge spike in traffic and it’s anomalous, it doesn’t match any of the other patterns, and the data doesn’t add up, question it and dig in. And that was really a groundbreaking moment for me, realizing these things will lie to you. If anybody’s used ChatGPT, I definitely call that the yes man in my arsenal or my toolkit, if you will, because it’s always like, John, you look great today. That shirt looks awesome on you. You cooked the best dinner ever. It always gives me props and says yes. And that’s what it was doing. It was like, you found an amazing insight, look at this. And then you put guardrails on it, like Twyman’s Law, to say, you can’t just throw out a number like that. You need to verify it. And since then, I’ve actually adapted it. This isn’t too technical, but all of these models that we use, or most of them, are probabilistic. You ask GPT a question, or Claude, Gemini, whomever you want, and it’s going to give you probably the answer. It’s going word by word: what’s the next logical word, and how does it go?
If you ask it, what’s two plus two, it says, I’ve seen this enough, that’s probably four. But when you’re asking it to do analysis with metrics and dimensions and all these different things, it sometimes tells you with a thousand percent confidence that you had this massive traffic spike, that you’ve got a viral sensation on your hands, and it thinks it’s true. And so I figured out a way to take those probabilistic models and build deterministic rules into my instructions. So I say, never just give me a number. For every number that you generate, I want to see the SQL query, I want to see the math behind it, and I want to know the logic. I want to know what’s missing from the data, and I want to know what you can’t show me reliably. And that has also helped me to provide those guardrails. So I started with Twyman’s Law, but I’ve evolved it to have this deterministic layer that says, don’t just give me a number, I want you to perform the calculation. And I actually have SQL built into my instructions that says how to do this and how to get at it. And that for me has really upped the reliability of the answers that I get, because it will give you garbage and junk and mislead you out of the gate unless you put those filters on it.
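The two guardrails described above, a Twyman’s Law check on suspiciously good numbers and a deterministic rule that no number travels without its query and logic, can be sketched in a few lines of Python. This is an illustration under stated assumptions, not SEER’s production setup: it assumes daily session counts and the share of sub-one-second sessions have already been queried, and the `ReportedMetric` type, the table and column names in the sample SQL, the 3x-median spike threshold, the 50% short-session cutoff, and all sample numbers are hypothetical.

```python
from dataclasses import dataclass, field
from statistics import median


@dataclass
class ReportedMetric:
    """A number is only reportable together with how it was produced."""
    name: str
    value: float
    sql: str    # the exact query behind the value
    logic: str  # plain-language description of the calculation
    gaps: list[str] = field(default_factory=list)  # what the data cannot show reliably

    def validate(self) -> None:
        # Deterministic layer: refuse bare numbers that arrive without provenance.
        if not self.sql.strip() or not self.logic.strip():
            raise ValueError(f"{self.name}: missing SQL or logic, so not reportable")


def twyman_flags(history: list[float], today: float, short_session_share: float,
                 spike_ratio: float = 3.0, bot_share: float = 0.5) -> list[str]:
    """Twyman's Law: question a number that looks too good before celebrating it."""
    flags = []
    baseline = median(history)
    if baseline > 0 and today / baseline >= spike_ratio:
        flags.append(f"spike: {today:.0f} vs. median {baseline:.0f}; verify before reporting")
    if short_session_share >= bot_share:
        flags.append(f"{short_session_share:.0%} of sessions under 1s; possible bot traffic")
    return flags


# Made-up example; the table and column names are hypothetical.
metric = ReportedMetric(
    name="blog_sessions_today",
    value=12000,
    sql="SELECT COUNT(*) FROM sessions WHERE page_path = '/blog/post' AND session_date = CURRENT_DATE()",
    logic="Count of sessions that landed on the blog post today",
    gaps=["No bot filtering applied"],
)
metric.validate()  # passes: SQL and logic are present
for flag in twyman_flags([410, 395, 430, 402, 388], metric.value, short_session_share=0.99):
    print(flag)
```

With these made-up numbers both flags fire: today’s count is roughly 30 times the median of the prior days, and 99% of sessions last under one second, the same pattern as the bot in John’s story. In practice the thresholds would need tuning to a site’s normal variance.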

00:30:33.63 [Moe Kiss]: So what’s your perspective, then? You’ve applied that to your own instances, and Wil is a phenomenal leader who gets the data side, right? But there are lots of stakeholders who don’t, who won’t build that. So is your thinking that you need to find a way to apply those guardrails for everyone in your company? Or how do you scale that?

00:30:57.05 [John Lovett]: Yeah, great question. Remember, I mentioned that we’ve all had agency to build our own agents and experiment and do things. I am the vibe coding master. In fact, I was up till 2 AM last night vibe coding because I was having so much fun. So I build these prototypes, I build them for me, I play with them, and I see what works. I iterate on them. We just had our first release day, and I believe it was 11 agents that got released to the company. These are agents somebody vibe coded that went to our engineering team; they stress tested them, productionalized them, and made them ready for the whole company to use. And that is where I get things like Twyman’s Law, the data guardrails, into production for everybody. As a company, the engineering team has built out what we call the CRMCP. The CRMCP has connections to our data sets. It’s got connections to all of our sales transcripts. It’s got connections to every one of Wil’s presentations. It’s got connections to every one of our town halls. You can get so much information from this one CRMCP. That is how we productionalize things: once they’ve been vibed and tested and tried, we bring them to the organization by having them go through a relatively specific process. But everybody’s encouraged to bring ideas. Kind of back to the original question: for my team, I built an AI innovation lab. We’ve got weekly meetings; they’re optional, and I usually have them on Fridays. I’ve got a core team that prioritizes ideas and puts things on our roadmap for delivery. But everybody is allowed to bring ideas. Everybody brings challenges: this is something I’m playing with, or this is how I’m trying to work through this issue.
And so that just opens up the door to possibilities, with everybody trying things and getting excited about what they can possibly do with AI that can help them. So that’s been a big lift for us as well.

00:33:01.01 [Julie Hoyer]: I’m curious, because I’ve seen it so many times, and I’m sure all of us have: a new technology comes out and it’s very exciting to use it. John, it sounds like you guys have gotten to the point where you’re very focused on problem solving, like what you’re saying with your Friday meetings, right? People bringing ideas or problems, things they’re trying to work on. So how do you keep your team, or in the beginning, how did you get your team, to really think of using AI as a tool to solve specific problems? Is that a fight you’re still fighting? Do you feel like you guys are pretty mature in that thinking? I’m curious how you got there, if you are.

00:33:34.26 [John Lovett]: Yeah. So it is definitely a tough one. I’ll give you another real-world example. It was three weeks before the Olympics were about to start. I’m a huge Olympics fan. I think it was a Saturday morning, I was scrolling on my phone, and I said, hey, what if I ran the world’s largest GEO test: test a bunch of prompts and see what kind of data I can get back from LLMs about the Olympics. So this is you typing a prompt into ChatGPT, Perplexity, all the different models, and seeing what responses come back. And I developed five hypotheses. Narrative persistence is one: how long does a narrative stick before it changes? Temporal velocity: when an event happens, let’s say somebody is awarded a medal, how soon do the AIs pick up on that? I looked at social proof: does social presence make a difference in how frequently athletes show up in LLM responses? So I had these five hypotheses, and I wrote a blog post about it. I was so excited about all the data I was collecting. And I put out a general call to the team. I was like, hey guys, I’m the only person working on this project right now. Everybody’s invited. Come on, jump in, give me some help. And it was crickets. No one responded, right? Because everybody’s like, I’ve got my day job, I’ve got all this stuff to do. And again, this is sort of that, I don’t want to call it apathy, but like, I’m afraid of it. I don’t know how to do this. I don’t know what you’re asking me to do. I’ve never done that before. How do I do this? And so the Olympics started. I had three waves: pre-Olympics, during the Olympics, and post-Olympics. Obviously we’re in that post-Olympics phase right now. During the Olympics phase, nobody had responded to me. It had been three weeks, almost a month. And I said, you know what? I can’t have this.
I went to four of the people on my team and I said, I need you to be a leader here. Here’s the hypothesis; it’s framed. I built an agent to be able to analyze the data. It had all my guardrails in it. It had the hypotheses. It had what we were testing, it was showing data, and I was doing preliminary results. I said, I need you guys to log in here and either prove or disprove these hypotheses with the data. And every single one of the four people I asked was like, I will do that. And again, I had to think about how I was going to write it. I used my agents to help me: what’s a persuasive message that will make people without a lot of time want to do this and adapt to this? So I was thoughtful about it. Do you think part of it was just a bystander effect? Like, you kind of ask everyone, and everyone’s like… and as soon as you went to people individually? Could very well be. And again, that’s part of it. You know, I’ve got a big team. I don’t see everybody every day, so I’m reaching out to people that I see on Zoom maybe once a week or just at big meetings, and saying, hey, I need your help on this. This is an important project for us. This is going to help us know what to test with regard to GEO. It’s going to tell us about how the models think and how we can use that to build tests and experiments and really understand what’s going on. Everybody’s on board. We’ve got an all-hands tomorrow, and we’re not even done with the analysis. I’m the fifth person; there are five hypotheses, and we’re all going to do five minutes on: what are we learning so far? What have we found? How is this going? And it was that little nudge, that personal touch, reaching out directly, that helped me get my team on board. Because when you ask that bigger question, there’s a lot of, well, he’s not asking me, I can kind of shirk off into the shadows and see who else will step up first.
So that was a big one for me. And I’m super excited about the results. I’ve already found so many fascinating things just through this research. So probably by the time this is published, you can check out the GEO results.

00:37:44.14 [Michael Helbling]: Yeah, I like that. I find that certain people just jump in and start doing their own AI process, and other people need AI defined into the process for them. I was talking to somebody recently, and they were like, I need my team to be doing this. And I was like, well, why don’t we set up a process where you take them through these steps? And one of the steps is, you go do this thing with AI. Now you’re putting an AI enablement step into the process, just making it part of the standard process for them. And it was like, oh yeah, that’ll totally work. So some people just need you to lay out how to do the steps. A lot of us, because of the way we are with analytics and curiosity and asking why, will go into the AI and be like, I want to set up a series of this, I want to set up a process. So we’ll just start with the AI, work through it, and learn as we go. And other people need you to tell them exactly how to do it, and AI can be part of that. So it’s very interesting, because I’m watching adoption like this too. And I’m sort of like, yeah, not everybody is just going to jump in with both feet. So how do I get them active? And that was one of the ways we kind of thought it through: okay, one of the steps is you go to the AI and you do this, this, this, and this with it. And that’s how you get through the process.

00:39:09.28 [John Lovett]: I love that example, Michael. One of the things that sort of runs in parallel with that: when I use AI to do something, let’s say it’s an analysis, I’m like, hey, I’m trying to understand why this brand gets a bunch of citations but not a bunch of mentions. I do an analysis, and I get a good output that I like. I usually go back and forth with the AI. Maybe I’m a beneficiary of having lots of tools, but I’ll take a Claude output and throw it over to ChatGPT and say, what do you think of this? And it says, oh, that’s pretty good, but you forgot these three things. Then I’ll read it and edit it, put it back in Claude, and say, hey, how about this? And I was like, wow, that’s really smart, those are good adds. So I play them off one another. But the one thing that I always do, and this is maybe a limitation of tools in 2026: I use NinjaCat, I use Claude, I use Gemini, I basically use everything, but I am so worried about losing my work. It’s like that whole thing where you didn’t save your file and your computer stopped and you lost it. I hit context windows. We have, like, you hit your limit for the company this month, you can’t log in for 24 hours. As soon as I think that’s going to happen, or as soon as I get an output that I like, I tell the agent: write the instructions so that I can replicate this, give those instructions to another agent, and teach another agent how to do what we just did. Then I can pick that up and show my team: hey guys, I built this analysis, here’s something that we do every day, here’s an agent that will help you do this. You can ask it any question, and it’s going to guide you down this path of using the right information and asking you questions to get to the right outputs, producing something that’s relatively consistent.
And for me, that’s been a huge unlock because those people who are like, I don’t know what to do with this thing. I don’t know how to build an agent. They can certainly use an agent and they can certainly use something like that to help guide them through an analysis or really any type of workflow.

00:41:12.45 [Julie Hoyer]: How have your analysts felt? Because Moe, you and I have talked on previous episodes about the shift in work: looking at a blank page, where you’re creating your own thing, your own work, thinking through your own analysis, right? Compared to using AI to give you something to react to, it’s a completely different process in your brain. So I’m curious, John, along those lines: what has been the reaction or the feedback from your team? If they’re using AI in these tools for an analysis, what have they liked and disliked, the pros and cons of using AI to start an analysis where they’re checking the work and confirming what the AI has found, things like that?

00:42:00.86 [John Lovett]: Yeah, I think it definitely happens. I would say that the first reaction of the team is, this is just slowing me down. I have to ask it all the questions, do all the things I would do for the analysis, and then I have to go make sure it didn’t hallucinate or give me bogus answers. It does slow you down at first. It does make you go a little bit slower to say, I’m going to question that, I’m going to be curious about it. The whole question with AI is, is it going to do the job of the analyst? It can help surface insights. But if you don’t have the curiosity, if you don’t have a spidey sense for when that number ain’t right, it will just give you junk. So initially it does take longer to do things. And this might take us on a tangent, but what we’re doing for that today is this: my team still builds dashboards, right? We still have reports, and that is our source of truth. We get our data, we pump it through tools like Funnel into BigQuery, and we can do queries out of there. I find out the specific regex for the queries, and I replicate that in my tools, in my agents, so that when I’m doing an analysis, I can look at my dashboard and say, okay, LLM visibility rate is 42%, share of voice is whatever. What does it say in the agent? And if they match, then I feel good about that: okay, this matches my source of truth. If it’s way off, I’m like, okay, why was it off? What was happening here? We’ve tried to build in those checks so we have a source of truth. It used to be that I would ask it a question and then go to GA4 or Adobe Analytics and be like, all right, let me dig up this number.
Honestly, I’m so far out of those tools from a day-to-day perspective that I’d be clunking around like, oh, how do I find this? I don’t even know what exploration to build to get to this number, versus having the conversational agent where I can just ask it things. My team was great at that. They would do an analysis and then verify it in GA or whatever platform, so we could compare those two things. But we will still rely on that source of truth and that dashboard. AI isn’t going to kill the dashboard just yet. It’s an important resource to have for validity, for data quality, for making sure you’re not getting AI slop.
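The dashboard-versus-agent cross-check described here amounts to a reconciliation step. Below is a minimal Python sketch, assuming both sides can be reduced to a dictionary of metric name to value; the metric names, the 2% relative tolerance, and the sample values (other than the 42% visibility rate mentioned above) are hypothetical.

```python
import math


def reconcile(
    agent: dict[str, float],
    dashboard: dict[str, float],
    rel_tol: float = 0.02,
) -> dict[str, str]:
    """Check each metric an agent reports against the dashboard (source of truth).

    Returns one status string per agent metric. rel_tol is an assumed
    tolerance: values within 2% of each other count as a match.
    """
    status: dict[str, str] = {}
    for metric, agent_value in agent.items():
        if metric not in dashboard:
            # Nothing to validate against: unverified, not correct.
            status[metric] = "unverified: not on dashboard"
            continue
        truth = dashboard[metric]
        if math.isclose(agent_value, truth, rel_tol=rel_tol):
            status[metric] = "match"
        else:
            # A mismatch is the cue to ask why, not to pick one number.
            status[metric] = f"mismatch: agent {agent_value} vs dashboard {truth}"
    return status


if __name__ == "__main__":
    dashboard = {"llm_visibility_rate": 0.42, "share_of_voice": 0.18}  # illustrative values
    agent = {"llm_visibility_rate": 0.421, "share_of_voice": 0.31, "citations": 102.0}
    for metric, result in reconcile(agent, dashboard).items():
        print(f"{metric}: {result}")
```

Treating a metric that is absent from the dashboard as unverified, rather than as a pass, keeps the dashboard as the arbiter in the way the discussion above describes.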

00:44:37.28 [Julie Hoyer]: Have you guys turned the corner where you’re seeing efficiency gains from your new process of using AI in your day-to-day work? And then the second part of the question, I’m going to hit you with two before I forget it: on our International Women’s Day episode, Moe, you guys were talking about how maybe efficiency gains are not the only outcome or benefit that could come from AI, but right now that’s what people are most focused on. So I’m curious, John, have you guys found the efficiency gains? And are efficiency gains the only positive that has come out of integrating AI into what you do, or have you found other great things coming from it?

00:45:20.29 [John Lovett]: Definitely, the efficiency gains are a big thing. For us, it’s been a lot about, hey, we’ve got this process that we do, it’s part of the workflow, and now we can repeat it client to client to client. That’s agency life, right? We’re repeating these things: similar analyses, different data sets. That has definitely helped us move faster. The structured prompts, the way we build methodologies, and the way I tend to say, hey, I built this once, give me the instructions to build it over and over again: that has gained us a lot of efficiency. And then there’s the ability to upskill employees, right? I get a new team member, I get a contractor on my team, and I can say, hey, here’s an agent that’s already built, you can get up to speed much more quickly using this. So those have definitely been the efficiency wins. I think the second question, if I’m right, was: what else is there besides just the efficiency?

00:46:29.17 [Moe Kiss]: So, John, there’s a concept we talked about a couple of episodes ago, and I keep coming back to it. It’s funny, Julie, that you mentioned it, because I was going to bring up the exact same point. Jim Lecinski, I think is how you pronounce his name, wrote a book, The AI Marketing Canvas, and it has this quadrant thing. It talks about internal productivity, and that’s really where everyone’s focused. But the other quadrants are, first, internal growth: using tools to accelerate your workforce. Then there’s external productivity, which is a lot more of that customer service: how you can use it to get productivity gains for your users. Then there’s the fourth quadrant, which is really external growth: using AI to completely unlock new revenue streams. My observation is that everyone’s really stuck in that internal productivity quadrant. From what you’ve shared, it sounds like you’re also using it for internal growth. Is that something you’re seeing play out, where the productivity gains just seem to be the thing everyone’s so anchored on? And the other thing I want to understand is: if you are having productivity gains, how are you measuring that?

00:47:39.66 [John Lovett]: So, I mentioned earlier the horizon builds that we’re doing. Every horizon build has assigned productivity metrics: how much time did it save? How much money did it generate? We have KPIs that we build. You guys know I like KPIs. So we’ve got KPIs around each one of those things, and we are measuring productivity in a number of different ways. With growth, this is an interesting one, because it is sort of a creeper. It moves more slowly than the productivity gains. But I’ll give you one example. I mentioned that we record all our calls. We ask our clients if we can record these calls, and when they allow us to, we do. So we’ve got all these transcripts. My head of BD comes to me and says, hey, I’ve been talking to this prospect since October, and we’ve had a dozen conversations. I built a NotebookLM that contains all the transcripts and what we talked about. And now here we are in January or February, and they just said, we think we need to include analytics in the scope. Everybody’s been talking about this project. We’ve got pricing calculators, we’ve got scopes of work, we’ve got all these things. And he basically said, I need you to get up to speed on this. So I was able to use all of the resources: the transcripts, what the client wanted. I did get on one call with the client and got to ask my very specific questions. But immediately after that call, with no prior knowledge, I was able to write a scope of work, and my BD guy came back to me like, holy shit, John, you nailed it. I can’t believe you got that figured out in such a short amount of time. And we didn’t even talk about it. He just gave me the resources, and I was able to plug in and use my tools to ask: what does the client need? How does that match up with my products and services?
And then what can we offer them that’s going to fit what I heard in our conversation? So that, for me, was a growth moment. Not only did it save me time, since it would have taken me months to get up to speed, but I was able to turn it around in like 24 hours and get something so spot on that my BD guy was like, that’s amazing. Hopefully, fingers crossed, that deal comes through, but it was a good growth moment. That’s one example I can think of. The other thing I’ll mention: productivity is obviously where you want to go, but part of this, as analysts, is that there’s a whole new discipline. We call it GEO, but it’s AI search, right? We’ve got all these models, people have questions, and we’re moving toward this zero-click world. It used to be that you ranked on Google or Bing or wherever, somebody saw your link at the top, they clicked it, and you got a visit to your website. Now your brand is getting surfaced via these LLMs. People see your brand, and it may not get cited. They’re like, okay, I’m narrowing my list down, I’m seeing these things, but I’m not even clicking through; I’ll just type in the address directly to get to that brand’s website. So for me, part of this is being that curious analyst: I’m collecting all this data, writing prompts, developing prompt methodologies, and I’m finding wild stuff. One example: in Claude, we see mentions, which is your brand being mentioned, and citations, which is the link to, whether it’s a podcast episode or your resources, whatever it is. All of a sudden in Claude, all the citations dropped off December 1st. And I’m like, what happened? And just because I’m looking at all this data across all my clients, I was like, oh, Claude really did stop using citations at that point in time and just cut them off. And then the other example: I’m analyzing data.
I’m trying to build a report for a client, and I see the data went back to December 15th, 2025. It was the week that ChatGPT announced they were going to start having ads in their free accounts, right? So you won’t have them on enterprise or paid accounts, but the free accounts are going to start getting served ads. I actually looked at the data and was like, what is going on here? I started to see the nature of the responses change to purchase-intent-driven responses. Compared to the way ChatGPT responded to the same questions we were asking months ago, the dynamic was changing. I saw all these changes in the responses, and I was like, something’s going on here. And I connected it to the fact that they had just introduced ads. They had been prepping for like a month and a half to get ready for this. I mention that because there is so much to learn about how these models operate. It’s kind of like going back to the search days, when you were trying to understand the algorithm. We’ve got this whole new field, and who knows what the hell’s going on within these models? It’s up to us to figure it out. And if anybody tells you they know, I’m calling bullshit on that, because all we can do right now is experiment, test things, try things, and see what works. Honestly, in my 20 years in analytics, this is the most fun I’ve had, because I’m learning new stuff, I’m playing with new tools, I’m getting to see all these things. For me, that’s growth. I am growing as a professional because my toolkit is expanding and I’m learning all these new things. And I can surprise and delight a client by saying, look at something I found about you. I’ll give you one example. My client said, hey, this blog post just popped. Over the summer, it started ramping up and ramping up. We get all this traffic to it. It’s amazing. We’re so stoked on this.
And on the call with the client, which was a regular status call, I looked up their prompts and their GEO reporting, and I found the URL they had referenced. I said, wow, this is amazing. In the last two weeks, you guys have gotten 102 citations on this particular blog post. But in all of those responses, nobody mentions you. You’re never mentioned in this set. The category was risk management. They had become this authority on risk management that enterprises were citing, brands were citing, forums were citing, all these people were citing, but their name never got associated with it because it just wasn’t in there. So we developed a simple test: hey, don’t do it in a pitchy, salesy way, but just insert your brand name into the content. You know, here’s a description of what this is, and by the way, our products solve for this. They had a big FAQ section in the blog post, and I said, hey, just enter your brand name when you’re closing it out; say, we do this, and here’s the product that delivers this. Within two days of that test, mentions started showing up in AI Overviews, which is one of the fastest to pick up on changes to websites. And I was like, boom, proof point right there. I got the first signal: the change they made to their content pages produced a mention, which had never been seen before in about six months of testing. And that was only a couple of days into the process. Then I did another analysis yesterday morning, and I’m like, okay, the signal is strong. It led me down this whole other rabbit hole of understanding mentions and citations, and I learned about ghost citations, which I won’t get into. But this is the fun stuff: we’re curious analysts, we’re trying to figure stuff out. And never before has there been this playground of so much data, so much information that we can just dive into and show clients things they’ve never seen before.
And that, for me, is the growth. It’s gratifying, it’s fun, it’s exciting, and it’s definitely keeping me going.
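The mentions-versus-citations gap in this client story is straightforward to quantify once LLM responses have been collected. The sketch below assumes a hypothetical `LLMResponse` record holding each response’s text and the URLs it cites, and it detects the brand with a plain case-insensitive substring match, which is a simplification (it would miss abbreviations and could match unrelated words). The brand name and URLs in any usage are placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class LLMResponse:
    """One collected LLM answer (hypothetical record layout for this sketch)."""
    text: str
    cited_urls: list[str] = field(default_factory=list)


def citation_mention_gap(
    responses: list[LLMResponse], url: str, brand: str
) -> tuple[int, int]:
    """Return (responses citing `url`, how many of those also name `brand`)."""
    citing = [r for r in responses if url in r.cited_urls]
    # Naive brand detection: case-insensitive substring match.
    mentioning = [r for r in citing if brand.lower() in r.text.lower()]
    return len(citing), len(mentioning)
```

A result like `(102, 0)` describes the situation above: the page is trusted as a source, but the brand is not part of the answer. Rerunning the same count after a content change is one simple way to watch for the first mentions to appear.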

00:55:25.19 [Moe Kiss]: Oh my God, John, this is like positively infectious. I love it.

00:55:28.76 [Michael Helbling]: Yeah, I know. I’m trying to remember a time when I’ve seen you this fired up, John. Honestly, I’ve known you for a long time. This is great.

00:55:37.35 [Moe Kiss]: But so like you’ve had, I guess, I’m going to say the privilege of approaching this as a leader who then is trying to like bring your team along. There are lots of folks, I would say, who probably have the same level of enthusiasm as you, but might not be in a leadership role. What advice would you give that person, that mid-level analyst who’s doing all this playing, they’re having lots of fun, but how can they have a ripple effect on their business if they’re not in a leadership role?

00:56:05.44 [John Lovett]: Build something cool, show it to somebody. Show it to your manager, show it to your boss’s boss. If nobody picks up on it and you think it’s brilliant, share it on LinkedIn, share it on Measure Slack, share it somewhere, and get some feedback on it. See if you get that positive feedback where people are like, this is cool. We’ve actually done this with our blog posts: at SEER, we’re almost not allowed to write a blog post anymore until we’ve tested the idea on LinkedIn and seen if it gets any reacts, any comments, any mentions. So test it, play with it, put it out there, and see what you get as a response. And this may be harsh, but if you’re in an organization and you built something that you know is productive, adds growth, is clever, is adding to what you do, and your leadership doesn’t recognize that, it might be time to look for new leadership. But it is hard. I would just encourage people to experiment, build things within the boundaries of what you’re allowed to do, and then share them; put them out to the world. And if your leadership won’t listen, take it to LinkedIn, take it to Measure Slack, take it somewhere you can find an audience that thinks it’s cool. You’ll grow your brand that way, and you’ll be able to find your people, I guess.

00:57:21.29 [Michael Helbling]: Yeah, that’s great. All right, we do have to start to wrap up, unfortunately. This is so good though. All right, well, one thing we love to do is go around, share a last call. AI is never going to change that. Well, maybe it will, I don’t know. But John, you’re our guest. Do you have a last call you want to share?

00:57:39.20 [John Lovett]: Well, I have two quick ones, but I guess I need to ask permission. Am I allowed to offer another podcast? Yeah, of course.

00:57:45.93 [Michael Helbling]: Yeah, come on. Do you think we follow rules around here?

00:57:49.53 [John Lovett]: I would bring it anyway. The Artificial Intelligence Show is a podcast. It is run by, I want to make sure I get their names right, Paul Roetzer and Mike Kaput. Every Tuesday they put out an episode aggregating all the most recent AI news, and it’s brilliant. My wife actually loves listening to it with me in the car. We listen to it a lot, and she’ll be like, oh, their voices are so soothing. But just great intel, great information. They’re also the company behind the MAICON conference, which I think is rebranding to SmarterX, in Cleveland, I want to say. Cleveland, yeah. Yeah. Yeah. And Julie, you need to get there because it’s in Cleveland. Yeah, it’s a great conference. Their Piloting AI course is also the training that everybody at SEER was required to take. So they’ve got great resources. They do a free one-on-one training on a bunch of different things once a week where you can tune in and ask questions. Just a fabulous podcast, a fabulous resource, definitely worth checking out. And then my second one, a very quick hit. I encourage everybody on LinkedIn: there was a community that started, and I just happened to see it, called the GEO Community. GEO stands for Generative Engine Optimization. Some people call it AI search; some people call it all sorts of different stuff. I saw it and was like, that seems cool. The first couple of posts were very intriguing to me, and I started commenting, and all of a sudden the founder, Rohit, I want to say, is like, hey, would you want to be an admin on this and join me in managing this community? I think we’re only a couple of hundred people strong, but if you’re curious about GEO and all that stuff I got super excited about learning, check out the LinkedIn GEO Community. Good resource to get up to speed.

00:59:48.66 [Michael Helbling]: Nice. Excellent. Okay, Moe, what about you? What’s your last call?

00:59:53.42 [Moe Kiss]: Well, I am quite excited. Probably not as excited as John, but my good friend Eric Weber is back writing, and I’m super, super pumped about it. He has a great blog, From Data to Product, on Substack, and I get it via email. The latest one was on the conundrum of buy versus build, which is something I’m always super interested to read about.

01:00:18.51 [Michael Helbling]: Awesome. All right. Julie, what about you? What’s your last call?

01:00:22.57 [Julie Hoyer]: Okay, my last call is totally not AI or industry related at all. My life the past few months, you know, I’m just trying to keep my eyes open in the middle of the night with a little baby, so I’ve been doing a lot of reading. My last call is, I went down a path of reading some historical fiction books, and I read one that was really good. So if anyone’s looking for some new reading material, a little break from AI news, maybe switch it up. I know this is popular, but it was Code Name Hélène, by Ariel Lawhon, if I’m saying her last name right. Either way, really great book. It is about a British spy going to France near the end of World War II. It was a really interesting take, a different storyline that I had not really read about. It was just an awesome read.

01:01:16.18 [Moe Kiss]: Julie, I’m going to sidebar you after this and send you the name of an author who’s written like six books very similar to this. I’m going to read this one, but I’ll send you mine too.

01:01:22.79 [Michael Helbling]: We’ve got a whole other podcast going here. Yes, I have a last call. So we heard about this, and we kind of think it’s really cool. There’s a new data visualization contest, but it’s for children. So if you have a kid between the ages of seven and 12, there are two different age groups. I know, not everybody’s kids fit into that category.

01:01:47.26 [Moe Kiss]: I can fake, he’s tall, I can fake his age.

01:01:49.93 [Michael Helbling]: Yeah, whatever you wanna do. It's like Aussie age, you know, it's different. It's, yeah, the conversion. All right, anyway, we think it's a really cool idea. There are some really great advisors behind it, and they're doing a data visualization contest for kids. The contest opened literally yesterday, before the show comes out, so there's still time right now. You can go jump on their site; we'll put it in the show notes and you can check it out. If you have a kid in that age group, there are two different age brackets, I think seven to nine and 10 to 12. You can work with your son or daughter and come up with a cool data viz together; it might be a fun little project. So anyway, that was my last call. All right, John, what a pleasure. Thank you so much for coming back on the show. It's so good to talk to you. It's been fun. It's been great talking to you all. Yeah, and I know we're gonna see you at Marketing Analytics Summit, right, in April. Can I do a team look forward? Am I allowed to do that? Yes, of course, yes.

01:02:53.57 [John Lovett]: Absolutely. The extra day till Thursday, I am doing a half-day workshop on conversational analytics and how you can connect ChatGPT, or your LLM of choice, with BigQuery or Google Analytics, and you'll see it live there. Nice. At the Marketing Analytics Summit in Santa Barbara.

01:03:10.15 [Michael Helbling]: It's funny, John, your blog post inspired me to build my own conversational analytics integration with Google. I read your blog post and I was like, hey, I should try to build something like this, this is pretty cool. And I made some cool things out of it. Anyway, it didn't kill me, and I'm okay. I did stay up until two o'clock in the morning one time working on it, but that's the fun part, I guess. But you don't have to stay up until two o'clock in the morning to come to Marketing Analytics Summit. There are a couple of really important things about that. One is that it'll be April 28th and 29th. John will be there, and we'll be there. And we want your questions. We actually have a survey live right now: go to analyticshour.io/listener. Did I get that right? Yes, listener. Take our survey, and you can submit questions that we will, hopefully, answer live at Marketing Analytics Summit. So that's coming up; don't miss it. We also love to hear from you every other which way, too. So please reach out to us. If you're doing cool things with AI, or if you're inspired by some of the stuff you're hearing, of course we'd like to hear from you. And John, it sounds like you're pretty active on LinkedIn, so that's a great place to find you, follow what you're doing, and interact with you. You can also reach us in the Measure Slack chat group, and we love to hear from you via email at contact@analyticshour.io. So please reach out. And we have stickers, and Tim loves sending them out, so you can ask for stickers too. Just send us a little note.
All right, I know that I speak for both of my co-hosts when I say: no matter how AI is changing your work, and no matter how you're getting your processes rolled out, hopefully it's both driving efficiency and increasing productivity. But remember, keep analyzing.

01:05:20.12 [Announcer]: Thanks for listening. Let's keep the conversation going with your comments, suggestions, and questions on Twitter at @analyticshour, on the web at analyticshour.io, our LinkedIn group, and the Measure Chat Slack group. Music for the podcast by Josh Crowhurst. Those smart guys wanted to fit in, so they made up a term called analytics. Analytics don't work.

01:05:44.69 [Charles Barkley]: Do the analytics say go for it, no matter who’s going for it? So if you and I were on the field, the analytics say go for it. It’s the stupidest, laziest, lamest thing I’ve ever heard for reasoning in competition.

01:05:57.91 [Tim Wilson]: Tony? No. None of this in the outtakes. None of this. None of this.

01:06:01.92 [Michael Helbling]: None of this. Yeah, that’s fine. It’s yeah, that’s fine.

01:06:07.96 [Moe Kiss]: That's my, that's my thinking face. Like, what do you want me to do with that?

01:06:12.49 [Michael Helbling]: Moe, it's fine. And people know who we are now. If our listeners are like, I can't believe they didn't look engaged enough in this short video they put on their website, I'll be like, you know what? That's why we can't have nice things.

01:06:28.68 [John Lovett]: I love the images you guys are putting out there. They’ve been fun to watch.

01:06:34.74 [Michael Helbling]: Oh yeah, thanks to Google AI Studio and Nano Banana Pro. What's hilarious is, I don't even have good pictures of all of us. I just grab random headshots, throw them in there, and say, make a picture of this. It's pretty good. John, I don't know if Tim shared the video I created with Veo of him and me crip walking, but we're not going to put that on social media.

01:07:01.19 [Tim Wilson]: That's in the, that's in the Slack channel.

01:07:03.87 [Michael Helbling]: Yeah, that's in the Slack channel.

01:07:05.41 [Julie Hoyer]: That's the only reason I joined the, the Slack channel. 'Cause I heard there was some fun happening.

01:07:10.52 [Michael Helbling]: And I created an Analytics Power Hour-branded 40 that I'm holding while we're doing it. So nice.

01:07:18.87 [John Lovett]: Nice. Oh my God.

01:07:19.29 [Michael Helbling]: You're, like, an imagery wizard. Oh, oh no, John, you don't understand. I'm fully AI-enabled at this point. It's a problem.

01:07:32.12 [John Lovett]: AI-enabled, the dangers.

01:07:33.34 [Michael Helbling]: It’s not good.

01:07:42.54 [Moe Kiss]: Rock flag and review your workflows first.