#292: AI Without Adult Supervision with Aubrey Blanche

As Kevin McCallister once taught us: just because the house is still standing doesn’t mean everything’s under control. Everyone’s racing to adopt AI, but has anyone actually read the fine print? For this year’s International Women’s Day episode, we are joined by Aubrey Blanche to unpack the hype, the hidden tradeoffs, and the quiet ways teams are giving up agency in the name of “productivity.” We explore how data and tech teams are uniquely prepared and positioned to ask better questions, measure what really matters, and avoid letting the AI teenager run the house. Learn more about “phantom value” and why faster isn’t always better… or even cheaper!

This episode is brought to you by Prism from Ask-Y—your agentic analytics platform for automating analytics, exploring data, creating repeatable workflows, and delivering accurate insights—all without the need for manual query writing.

Links to Resources Mentioned in the Show

- The Adolescence of Technology: Confronting and Overcoming the Risks of Powerful AI

- Exposed Moltbook Database Let Anyone Take Control of Any AI Agent on the Site

- Disempowerment patterns in real-world AI usage

- AI safety is not a model property: Trying to make an AI model that can’t be misused is like trying to make a computer that can’t be used for bad things

- The AI Marketing Canvas, Second Edition: A Five-Step AI Plan for Marketers

- Should your AI notetaker be in the room?

- Heated Rivalry

- I Don’t Care What You Build (And Neither Should You)

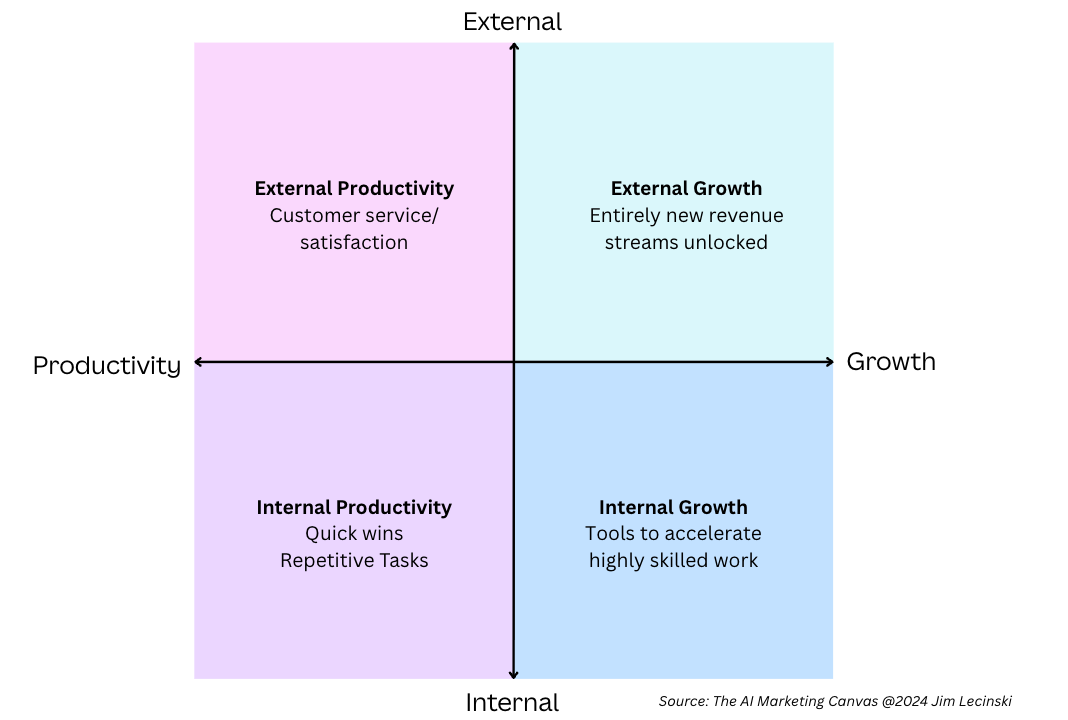

And the diagram Moe referenced:

Photo by Johanneke Kroesbergen-Kamps on Unsplash

Episode Transcript

00:00:05.75 [Moe Kiss]: Welcome to the Analytics Power Hour. Analytics topics covered conversationally and sometimes with explicit language.

00:00:14.99 [Moe Kiss]: Hey, everyone. Welcome to the Analytics Power Hour. This is Episode 292. The world of AI is moving lightning fast, and I think it’s fair to say that most of us are struggling to keep up. I know I am. There’s new tools, new capabilities, new risks, new headlines, and they seem to land pretty much every week. It’s getting harder to separate what actually matters from the hype. So for this episode, we want to chat deeply about what all of this means, especially when it comes to ethical AI and the real-world conundrums that we’re all facing in tech right now. If we’re honest, it feels a little bit like we’ve left the teenager home alone and that teenager is AI. The house is still standing right now, but maybe it needs a bit more supervision. But before we get into it, let me introduce my co-host, Val Kroll. Great to have you here.

[Val Kroll]: Hey, Moe, excited to be here.

[Moe Kiss]: I know. And this is actually our special International Women’s Day episode. So we have an all-women cast today, which is awesome. But it’s also really fitting because today we’re going to welcome back a guest who joined us many years ago for another important conversation about creating balanced teams and avoiding groupthink. So we’re really thrilled to have Aubrey back on the show. Since her last appearance, Aubrey has remained a sharp and influential voice at the intersection of technology, power, incentives, and human impact. She’s held senior leadership roles, including as a director at the Ethics Centre, a VP for equitable operations at Culture Amp, and as the global head of diversity and belonging at Atlassian. She’s also served on lots of influential boards and in advisor roles in the tech community. She’s currently completing a Master’s of AI Ethics and Society at the University of Cambridge.
So, with that amazing rap, suffice to say, she’s been spending a lot of time thinking about AI, about agency, about risk, about what responsibility actually looks like for the people building and deploying these systems. And I’m so excited to dig into what’s been on her mind, what she’s been reading and what she’s been thinking about. So, Aubrey, massive welcome back to the Analytics Power Hour. To kick us off, the current state of AI. Let’s just open it up. What feels like the most important bit to get stuck into?

00:02:34.48 [Aubrey Blanche]: Oh, my gosh. I love the metaphor you’ve used about the teenager. I have to say, there is no greater joy for a neurodivergent person than someone asking about our special interests. And I think it’s mine, so I’m so happy to be here today. But for me, I think the headline of what I’m trying to convey is actually about working with more intention in AI. So right now, there’s an incredible amount of hype, and in that hype, there are narratives that are just fundamentally untrue. So, there’s this narrative about inevitability. Now, the reality is the teenager exists. Now is not the time to debate whether we should have a teenager because the teenagers are in the house. But I think that the idea that AI is going to do XYZQ is actually not written yet. Yes, there are structural incentives that make certain things more likely. But that doesn’t mean inevitable. And so I think if we all embrace this idea that the way things have gone is not the way they have to go, that is a really powerful counter-narrative to what we hear from folks where, quite frankly, you know, the commas in their bank accounts depend on you believing that AI is inevitable and going to create value and things like that. And I’m not sitting here saying that can’t happen, but from my professional expertise, having scaled some of the most influential tech companies in the world, I actually think the way people at multiple levels running tech companies, certainly legislators in Australia, are thinking about this actually decreases the likelihood that we get to the good stuff and increases the likelihood that we get to the bad stuff. But again, we can choose differently.

00:04:12.80 [Moe Kiss]: Okay, so what you’re saying is that at the moment, people think it’s inevitable that AI is going to be very influential, have all these amazing outcomes. But the way we’re approaching it right now actually increases the likelihood of the less good outcomes, but not necessarily the good stuff. Is that what you’re saying?

00:04:32.20 [Aubrey Blanche]: Yeah, so let’s take, like, there’s this idea around that AI makes us more efficient and productive. Okay, so that’s not, like, on its face completely false, but it’s actually an incredibly dumb way to conceptualize the goal of AI. So if the goal is efficiency, what you’re saying is, I’m wedded to the status quo, I just want to do it faster. And it takes a very particular person to think the status quo is sufficient, is the way we want to run the world, right? Like you have to be having a pretty good time. Lots of those commas, or you just, like, aren’t likely to get killed by police walking down the street. And so, but I think what if we flipped it on its head and we said we actually believe that AI can be used for increased innovation and value. Right. And efficiency might be one of the tactics that we use in certain scenarios to achieve that, but efficiency itself is a bullshit objective. And so when I see, you know, in the case of like a national plan around AI that’s specifically focused around productivity, what I don’t see is consideration around the questions of what are we producing? Can we produce new things that actually are of higher net benefit to a broader set of society? Like we should be having those questions, but because I think there is such a gap in understanding how this technology works and the implications between folks who are sort of running companies that are building it and those that are trying to regulate and govern it, we’re not having the productive conversation we could have. And at the end of the day, I think it means we’re not going to get access to a lot of the benefits that are possible. And so part of my prescription for solving that, because I’m not seeing leaders, quite frankly, act in a way I’d like to, is that we each take on our own little sphere of influence and say, how do I make principled decisions about the use of this technology in the sphere that I operate in every day?
Because that actually does make a difference.

00:06:42.23 [Val Kroll]: So I’m curious if the reset on the objective, is that one of the ways that we can be more intentional upfront? Is that one of the things you’re thinking about in that space?

00:06:52.26 [Aubrey Blanche]: Yeah, I think so. Because I think if we agree that the objective is innovation or human flourishing, then we can then say, oh, it’s not about I’m going to throw AI on everything. It’s about saying, what’s the class of problems for which AI is an excellent tool, and what is the best way to use that technology in that use case to achieve that objective? It completely changes the analytical frame. It doesn’t mean we run away from the technology, but it does mean that we probably aren’t chasing phantom value, where there isn’t a lot of empirical rigor to suggest that it actually exists. That’s the thing that shits me a little bit is everyone’s like, oh, we can reduce our workforce. Okay, maybe that technology will work, but it lies to you a lot. And so like maybe you don’t want to get rid of the humans quite yet, even if it looks good for your P&L and your ASX quarterly results.

00:07:52.95 [Moe Kiss]: One of the things I read recently, and I can pop something about it in the show notes, it was by Jim Lecinski. And he had kind of like a framework for thinking about this, which is like a quadrant. And it’s like basically like in the bottom left quadrant, he’s got like internal productivity. So quick wins, repetitive tasks. And the top quadrant is really about external growth. It’s about entirely new revenue streams, addressing new customer problems. Really innovation is the way that I would think about it, that bucket. And one of the things, it was a very marketable way of framing it. But I really like the way that he thinks about, we need to get from that bottom quadrant to that top quadrant. And I feel like there’s a lot of commonality with what you’re saying here as well about, we’re doing the dumb shit with AI right now.

00:08:49.19 [Aubrey Blanche]: Yeah, and I’m not saying that we can’t, because I’m happy to share this, but I just wrote a piece about the ethics of using AI note takers, because I tortured myself about it for a while. And then I started using it, and I was like, wow, this is really great for my brain. And so that is an example of where something that’s basically become automated is actually a value add for me, because I’m doing more interesting stuff with it. Yeah, like that’s not an innovative, like I’m not creating something new with that. And so yeah, but I haven’t seen that, but I would agree that I think there is just more that we can do. Now, I have a classmate at Cambridge. She’s incredible. Her name’s Oya. And she talks about this, like the idea of co-intelligence, which is that so many people think about AI as replacing a human, which is this very capitalistic, I’m just trying to reduce my operating costs question. But she does the most interesting, amazing stuff, and her contribution is that she talks about co-intelligence, which is that looking at the way that humans think and the way that machines operate, and working together, actually creates more value for organizations. So in that way, these ideas I think are just not being talked about because people are so focused on the short-term returns that they’re getting. But I think if we start to optimize over longer time horizons, these ideas around experimentation and innovation and value creation potential actually expand those possibilities.

00:10:26.53 [Val Kroll]: Does it seem like, I guess my perception, I shouldn’t say it doesn’t seem like, my perception is that lots of organizations focus more on productivity or replacing headcount, not only for P&L purposes but because it feels safer, because it’s like, oh, it’s behind closed doors. It’s not like I’m throwing a chatbot out there that’s going to do something dangerous to my customers or make promises or hurt my business in any way. It feels like a safer way to test it out before expanding into those areas. First of all, I question if it’s actually, air quotes, safer to be doing any of that because it’s still playing with resources and people, but is that why organizations start there, or what’s the common thread between being in that bottom quadrant before they could start to enable more of the, air quotes, good stuff?

00:11:16.90 [Aubrey Blanche]: Yeah, I think part of it is a lack of appetite for risk taking, or risk taking of a particular type, I should say, because of the way that risk is thought of. So there’s some research, and I’m sorry, I can’t remember the citation, but they were talking about how 80% of corporate leaders felt that they were behind on AI. But when you looked at the data about where their companies were on AI adoption, they were either average or slightly ahead. And so people’s beliefs about what’s happening and what’s actually happening are quite divergent when it comes to the use of AI in organizations. And so I think there’s a particular kind of organizational risk management where, yes, of course, no one wants to put in a chatbot that starts identifying as MechaHitler, which you can Google, and the answer is Grok. But yes, so I think there’s a particular corporate risk, because the reality is once you get into that innovation quadrant, like the percentage of things that fail goes up and they fail in unpredictable ways. So this is something that, when we’re talking about generative AI in particular, is a known property of these models. Like, large AI models fail in unpredictable ways. And so the level of risk that an organization is taking is high. Now, I would say there are certain types of risks that aren’t necessarily being managed in the same way. So I rarely see corporate leaders, unless they’ve specifically engaged me to talk about this, considering the risk of Earth’s dwindling, you know, freshwater supplies, like when they’re thinking about AI adoption in their organizations. And so that’s something I would say is, again, I just think there’s a conservatism in organizations that’s holding them back from achieving the benefits and also from having principled and hard boundaries about places we shouldn’t be using this technology or shouldn’t yet be using this technology.

00:13:15.09 [Moe Kiss]: How like, I don’t know, I think one of the things is, as I was prepping for this episode, a lot of what was rolling through my mind is like it felt a lot like the privacy debate of a few years ago, where like individuals would give up their privacy for like little personal wins. But if you’re a big corporate, maybe you have to be more stringent. And this feels like a similar space where people are willing to accept a shittier output for something low value, but for something high value, actually, the stakes are higher. I guess what I’m trying to really conceptualize is, when you’re in a technology business, how do you think about those higher fidelity problems and what the guideline should be of where it’s acceptable to use it? How do businesses do that other than just paying you lots of money, which I highly endorse as a good decision?

00:14:10.12 [Aubrey Blanche]: Oh, thank you. I mean, one of the things, I was just chatting to a pro bono client yesterday, and they’re a particular organization in that kind of an off-the-shelf AI governance framework and decision-making principles are not appropriate, like we have to do something fully custom. But in my mind, the way to get to this is first to craft an organizational perspective on AI use. So whether you call that acceptable AI use or an AI policy, that should detail kind of the vision and the general beliefs that you have about how this technology is used, and probably have a set of principles that guide particular decisions. Then I think you should have pre-worked through at least a handful of anticipated scenarios that are going to come up. But then you also need to do enablement for employees, not just on how to technically use the tools, which I think is important, and which has to include safety and responsibility behaviors, but also, actually, most importantly, teaching the individuals within the organization how to apply the decision-making framework that you’ve made. It should be grounded in your values, the particular positioning of your organization. And that’s something that I love. I obviously do this in my consulting with AI, but at the Ethics Centre, one of the reasons that I joined was because when I was chatting to Simon, the executive director, one of the things that really struck me was he emphasized that at the Ethics Centre, our mission is to bring ethics to the center of everyday life, but we teach people how to think, not what to think. And I think that we need to take that principle into AI because the reality is so many of the problems and the challenges that people face around this technology are because they actually haven’t been given a framework of how to make decisions within their scope.
And I think there’s a special risk because most of us have not grown up actually being taught ethical decision making in particular. And so there’s a skills gap in the workforce to actually be able to, and there are ways to think about ethics and responsibility in a structured way, but most people haven’t been exposed to a framework or a process to be able to do that for themselves.

00:16:22.21 [Val Kroll]: Michael, how many tabs do you have open right now?

00:16:25.62 [Michael Helbling]: Oh, I’d say enough to qualify as a distributed system and probably a cry for help.

00:16:31.69 [Val Kroll]: Well, same. If you’re an analyst, you’re basically full-stack now. Excel, BigQuery, SQL, dashboards, plus explaining conversion like it’s a bedtime story.

00:16:44.14 [Michael Helbling]: Yeah, and every tool wants the same context over again. Which table? What’s revenue? Why is July doubled? Okay, sure. Whatever. I guess I just live here forever now.

00:16:57.34 [Val Kroll]: Well, that’s why we’re hyped about Prism by Ask-Y, the AI analyst moment. You ask in plain English and Prism orchestrates across your stack, queries, views, charts, all without the constant tool hopping.

00:17:10.04 [Michael Helbling]: Yeah, it’s context-focused too. It remembers your definitions with the jam memory. I mean, it will literally hang onto what does conversion mean in your world.

00:17:19.36 [Val Kroll]: And you can save your best workflows as skills, portable expertise you reuse anywhere, like clean GA4 medium field variations so you don’t reinvent the same duct tape logic weekly.

00:17:31.39 [Michael Helbling]: Yeah, and there’s community skills. Stuff other analysts have already proven works. So you don’t end up debugging a formula. Sometimes it looks like some kind of ancient ritual or something.

00:17:43.37 [Val Kroll]: Prism also does SQL views with version control, so you can save, commit, and rollback changes like a responsible adult.

00:17:51.69 [Michael Helbling]: It sounds amazing. It’s built by analytics practitioners, and there’s free early access while they continue to refine and build the product.

00:18:00.60 [Val Kroll]: So to go see for yourself, go to ask-y.ai and join the waitlist.

00:18:06.12 [Michael Helbling]: And the best thing about it is use code APH and that will jump you to the top of the waitlist. That’s ask-y.ai with code APH. Just think about it. Fewer tabs, more answers, same chaos, but this time more organized.

00:18:25.10 [Val Kroll]: But how does an org, I guess like, you know, outside of bringing in people from the outside, I’m just curious about who inside of an organization is best poised or could mobilize to think about the valuation of those types of risks. I like how you said in scope. Everyone’s role probably has a different amount of risk that they should be allowed to take or comfortable taking, but how do you start to think about assessing that risk?

00:18:52.71 [Aubrey Blanche]: So I think it’s, and there’s so much debate in kind of academic circles about like where accountability sits and how governance structures work. And so I think it really, but for my, I hate to say the answer is a committee, like in general. But I kind of think that, in that you need a set of people to think about these risks. You need folks who are actual risk management professionals who understand those processes, but you also need an ethicist who understands the use cases and market that you’re in, because the risks of AI are so unique to how it’s being used. There’s a side note that AI doesn’t mean anything. It’s like a giant bundle of technologies doing a bunch of stuff. You need an ethicist who is qualified to speak to you about those particular issues. You also need someone to represent the customer or the external face of the company because there are major reputational risks and considerations in this as well. And then you probably need some technical folks who can rein us in when we get out of line about what’s actually achievable. So if we’ve decided philosophically this, we’ve made an operational decision, but what does that actually look like in terms of developing or delivering a product or in putting a tool in the hands of our employees? I recently learned about a company that’s based in the UK, that they are incredibly rigid about their responsible AI approach to the point where any employee at any time can raise an ethical issue about something they’re doing with AI that can actually be deferred to an ethics council for debate and resolution. Wow. So really, really cool. And so I offer that as an example that, like, if they wanted to, they would, in the sense that like, yes, we can’t mitigate all risk. I’m not trying to say that, but we could do much better than we currently are if companies had the will.
And as someone who spent a lot of my career in DEI, so diversity, equity, inclusion, doing kind of anti-discrimination and social justice work across tech, like the number one factor that I have seen in whether programs that are about responsibility and ethics and social justice, et cetera, succeed is: does the CEO care enough to keep it funded when every other incentive in the company is to shut it down?

00:21:17.94 [Moe Kiss]: I want to push you a little bit because I feel like folks listening to this episode will be like, yes, I want to do this. I want to go into my organization. And someone is going to say this. And I’ve sat around with you and debated many a time. And I know I’m going to say Aubrey is probably like the best, best person ever to have a discussion with because she’s so good at like reframing things. So sorry, I’ll turn down the fan girl right about now. But so someone in the organization will be like, yeah, cool. We can build some frameworks and guidelines. But AI is moving so fast. By the time we build the guidelines, they won’t be relevant anymore. So how would you handle that conversation?

00:21:58.42 [Aubrey Blanche]: Okay, I kind of think it’s silly. I wouldn’t say that if I was in an actual debate because I care about influence and changing people’s minds. But no, so I think that’s true. But that’s why I think for me, and there is debate about this, so I don’t want to act like it. But like, for me, principle-based frameworks actually solve some of that problem, because the idea is if you get into a framework where it’s like this is in, this is out, and you have a laundry list, yes, that’s going to get stale really quickly because the way the technology works is going to change fundamentally, or the way it’s being deployed is going to change really quickly. But for example, a company could make a decision that says, we don’t deploy technology that makes decisions about humans into the market without having done thorough impact assessments, measured for the potential of bias, and also developed a process for someone to alert us if something has gone wrong. You can decide that, and the underlying function of the technology changing actually doesn’t change that as a governance structure. The way you achieve those things may change, and so you need to be flexible and always willing to update your processes. But so yes, I do think it moves fast, but the idea that like, oh, it moves so fast, we just can’t do the right thing is like the bullshit that the tech elite has been selling us for decades, because it’s more convenient for them and because it maximizes their profits. And I want to say something really specifically.
There is a difference between believing profits should always be maximized as the primary goal and, like, we can maybe give up a little bit of that to not destroy civilization. So there’s often this binary of like, oh, you hate money, or like you want to make all the money in the world. Like, no. We could make principled decisions that, yes, may actually have some potential, like, marginal impact on profit. But like I sometimes push leaders to say, are you standing behind the behavior that maximizing your profit is more important than the welfare of your employees or customers? And would you be willing to say that to the media? Because that’s the implication of your decision. And so I’d put that to folks to say, if you believe that, there’s probably nothing I can do to help you. But if that’s not what you mean, we can actually take different actions to align those values and beliefs in a way that supports business, supports growth, but also balances the kind of risks that come up. So like the middle way is possible. And so I just want to call that out is like, it’s not one or the other. There’s a giant spectrum in the middle.

00:24:33.72 [Val Kroll]: I think that a lot of, especially thinking about analysts working inside of organizations, are feeling disconnected from those larger implications when they’re deciding which note taker am I going to send to the meeting, to pull on that strand from earlier. But I guess, is there anything that you would offer or suggest for someone inside of an organization that has access to use those tools internally, but maybe hasn’t been given a lot of guidance, but wants to be a good actor in all this, that maybe they’re not going to be the one to run up the flagpole to the CEO, that we need to be doing all these things, but is there anything in the middle for them that you would suggest they keep in mind?

00:25:16.80 [Aubrey Blanche]: Yeah, I think it’s actually the same kind of advice I would give to anyone who wanted to be kind of an advocate or an activist within an organization is look at who you are. So what’s your position in the world? And then what power do you have in the organization? So people often think of power as like formal power, like I can hire you, fire you, promote you. But things like, do you have relationships? Do people trust your judgment? That’s the type of influential power. And to say like, number one, make your decisions for you. So we’ll use the note-taker example, because I’m like all about it.

00:25:53.79 [Moe Kiss]: I’m going to ask you to like walk us through how you made those ethical decisions. Sorry to distract, but.

00:25:58.99 [Aubrey Blanche]: No, and I have a whole article that you can put in the show notes, but basically, let’s walk through how someone says, okay, I can’t control this, but X tool has been whitelisted, or allow-listed, for note taking in my organization. I’m going to decide how I’m going to use it. So I’ve decided that there’s utility benefit to me, like there’s an obvious benefit, but there are harms in terms of potential privacy or data leakage issues if they’re training on my data or depending on where that data is stored. And so for me, when I walked through that, I said first, like, one, are they using my data to train? I don’t ethically stand behind companies using my data to train their models. My economic argument is that’s a resource they’re not paying for. I’m paying them for the service, and then they’re extracting value from me, like that doesn’t feel like equitable value exchange. It also exposes myself and the people that I have meetings with to potential security issues. So a lot of these companies are newer. They don’t have the robust security architectures that you would expect of enterprise tools. And also the use of AI creates security vulnerabilities that are often unanticipated and there’s a huge rise in like AI-assisted cyber attacks. So data leakage risks are just higher. So for me, that meant, okay, I’m turning off data training in the tool that I use. It’s important to note that companies have an incentive to keep that turned on by default. So you have to go and turn it off. I think that’s an unethical design choice. I think the default should be off and people can opt in if they want to. It’s a dark pattern. Also thinking about, have I done my due diligence about the security practices of this organization? So I want to look to see if they have a SOC 2 Type 1 or Type 2 certification, if they have ISO 27001. And then ideally, so those are like standard security control certifications.
There’s also a new standard called ISO 42001, which is the AI management system standard. So this is something I would want to see, but recognizing that I think somewhere slightly north of 50 organizations in the world, it is believed, have the certification at this time. I do want to give a shout out to Culture Amp, because they are one of the companies that has that certification. And I love that about them. But so those are the things I would look at. And then I’m thinking about how I’m gathering consent to record. So depending on where people are in the meeting, that might be a legal issue. But it’s also an ethical one that people need to be able to opt in. And so for me, the qualities that generate fully informed consent are one, everyone knows they’re being recorded. They understand the risks that they’re taking with their data and how it’s being stored. And also they feel full agency to opt out. And because the note taker I use doesn’t call into the meeting, so no one can see that it’s there, I explicitly start the meeting by saying, hey, I really like to use an AI note taker so I can be more present in meetings. I want to be thoughtful that this does not train on your data, but the data is stored in the cloud on AWS. So recognizing that if you’re boycotting Amazon, that might not work for you. If you have any problem with this, I’m happy to take notes by hand. So that’s how I start my meetings where I choose to use that, but I also only use note takers in meetings where there isn’t sensitive or confidential information being shared, so I don’t take any risk with that data leaking. But that process, yes, I’m literally an ethicist, so I do that, but you can follow people who do that pre-work for you. So you have control over whether you use that note taker and the costs and benefits. But I would say, like, for me, I curate my social media ecosystem with a lot of people smarter than me so that I, for a lot of things, can kind of skip the deliberation process, because they’ll walk through their thinking and I can go, oh, you shortcutted me, and I figure out the ethical decision I wanted to make. And that’s something that a lot of my online content does, is I try to talk through how I’ve thought through a problem so that people can decide if, like, that’s the lane they want to go in also.

00:30:10.88 [Moe Kiss]: I love this. I love also how much personal responsibility you’re demonstrating because I think sometimes it can be really easy to be like, well, someone should give me guidelines and someone should sort it out. And like, I can’t do anything within my sphere of influence. And I really love to, like, stan some personal responsibility. I’m curious to hear your thoughts specifically as it pertains to data practitioners. Like, I think there’s another layer on top of that, which is we’re often privy to user data, first-party data. There’s a lot of ways that it’s unlocking incredible value for data folks. But do you think there’s another layer of dimensionality on top of that we should be thinking about, particularly in the data space?

00:30:56.04 [Aubrey Blanche]: Yeah, so I would say like the closer you are to sensitive or confidential information, the closer you are to harms. And so I think the level of personal responsibility goes up. But one thing that I’m really encouraged about by especially folks in the data space is that we’ve practiced for this before. When GDPR came into effect, the hygiene behaviors around privacy, the bar got raised in major ways. Obviously, if you’re not operating in Europe or on European citizens, but I think that the norms and practices around privacy, this isn’t actually fundamentally different. I hope that people would take a bit of hope in that and that they actually already have a lot of the skills needed to do this well. So this isn’t some, it’s easy to be like, oh, it’s an alien species. Sure, it’s kind of weird, but it’s not fundamentally different. And there’s a whole academic literature debate about whether AI is fundamentally special or it’s actually just a normal technology that mostly works faster. I tend to believe it’s more of a normal technology. And so to me, what that says is the skills and frameworks that we already have are useful for governing and managing the risks associated with this technology. But I do think from a data professional perspective, the most powerful thing you can do is be open about asking what could go wrong and what do we need to do to prevent that. And I think if we just got in the habit of, before we do, taking a moment of consideration to say, like, what’s the worst case scenario? Now, one thing that I will say that concerns me is that there’s kind of two specific issues that make answering that question correctly really difficult. One is that there’s a huge number of people who do not understand how AI works. I think for data professionals, that is less of a risk. They just tend to understand the technology more. 
But the second piece, and I know this is now going on a half a decade of talking about this, but a lot of people in the data and tech space don’t have the lived experience to accurately answer that question because the worst case scenario doesn’t happen to them. Wait, say more. So like, and this is an oversimplification, but like how many rooms full of data people are like a bunch of white dudes with no disabilities who like make over six figures? And I’m not critiquing them for those qualities, although I could. It’s that the likelihood that they have, for example, read a bunch of black feminist theory is quite low. And we know that black women are uniquely at risk of being harmed by poor deployment of these technologies. So it’s great that you build the muscle to ask what could go wrong. But you also need to critically question your own ability to answer that question in a way that’s universal. So Lucy Suchman, who’s a feminist science and technology studies scholar, talks about the idea that people with a lot of power or privilege build things in their own image and assume that their experiences are universal. And so that’s something I want to talk about, is we still need to talk about who’s in the room and what qualifications they have to make those decisions. There’s also some interesting research being done by someone a year ahead of me in my master’s, looking at the demographic distribution of people in the AI ethics versus like more technical spaces, because AI ethics is actually much more female, much queerer, much browner than like the technical things. And so there’s, again, this is why I say, it sounds a little self-serving, but you need an ethicist in the room because the likelihood that the room is qualified to answer the ethical questions is quite low without them.

00:34:58.64 [Val Kroll]: I like that a lot. Can I ask another data, bring it to the data crew specific one? Cause that was, that was awesome. You mentioned something earlier about, um, phantom value. And I wonder if you could expound upon that a little bit is I think I know what you mean by that, but I would love to make sure that I, I understand. Is it just like a perception versus a reality or a lack of measurement to objectively say whether or not, you know, use case was valuable to the organization or yeah.

00:35:29.09 [Aubrey Blanche]: D, all of the above. So all of the above. So there is a couple of particular threads that are all contributing to that belief that I have, which is one, there’s not a ton of research. And part of it is because we only have a couple of years of LLMs rewriting the world, although AI as a concept has existed for a long time, depending on how you define that, which is, again, a very specific thing. That’s another episode. Yeah, like, people with PhDs debating what artificial intelligence means. And so I think there’s, number one, like, there isn’t a lot of hard evidence that, like, the primary benefit of using AI is, like, increased financial return specifically. Like, the data is just pretty thin that you can draw a direct line between, like, throwing AI at a problem and I’m suddenly making more money. Again, if you lay off 20% of your workforce, that’s probably going to happen, but you’re going to likely incur a bunch of other problems that are more expensive than whatever gain. And not to call out specific companies because they’re not the only ones doing it. So I think it’s really important that this is a broad issue. But like Klarna got rid of a bunch of their customer success staff, and then a year later hired the team back because they realized that the technology couldn’t do what they somehow decided it could do without any proof. So I think there’s that. And then I think there’s also just the, yeah, the reality that this tool may or may not return the kind of returns that we’re thinking about. And so I don’t think we should be going all in on something that’s so untested because right now like entire markets are responding to like PR talking points written by people who have an incentive for you to believe that and don’t really have any accountability structures to tell the truth.

00:37:23.30 [Moe Kiss]: I think one of the things that I’ve been chatting to a few friends about who own small businesses and whatnot is a lot of the pressure that’s on them is that investors or clients are basically expecting the returns from AI to reduce prices or increase revenue streams, but it’s not actually performing at that level yet. Some of the smaller businesses are really in a crunch position because they’ve got these clients who are like, well, I expect that you’re going to charge me less. But it’s not actually providing that kind of value to our business yet. Now you’re just asking. That’s a really difficult position to be in.

00:38:02.47 [Aubrey Blanche]: Yeah, I mean, you know, perhaps a little bit more radical philosophy than like the average listener of this pod is on. But like, yes, so in general, there’s a ton of academic discussion about the fact that like the logic of AI as it is currently being built and deployed is like extractive and capitalistic in that it is inherently being used to devalue labor and expertise. I cannot remember who said it, so I feel really bad about this, but AI is kind of at a point right now where it can write a really good facsimile of a PhD level paper, but you wouldn’t trust it to make decisions about your kids. And so I think that, again, we just need to be a bit more deliberative about this. I think we are a bit, as a society, drunk on the marketing hype and we’re not making principled decisions. I think there’s also a question in that with a small business. So I’m thinking like professional services, right? Like a consulting business. It’s like, oh, well that took you less time to do. And it’s like, okay, so you are assuming that the cost to you is based on the time it took me to execute that as opposed to the quality of the work, which speaks to an underlying belief about how we value expertise and labor, which doesn’t make sense. The story that I think illustrates this well, and I don’t know if it’s actually real, but it’s like floating on the internet, so Pablo Picasso is sitting at a bar, and some dude six months later realizes he’s Pablo Picasso and says, oh my God, could you draw me a thing? And he doodles on a napkin. And he says, that’ll be $30,000. And the guy says, but that took you five seconds. He said, it took me 30 years to be able to do that in five seconds.

00:39:48.45 [Moe Kiss]: Oh, I love that analogy.

00:39:52.40 [Aubrey Blanche]: And so I think that part of getting away from that is actually equipping small businesses to explain the source of the value that they’re providing to a client. And then also recognizing that some clients just only care about the bottom line and that sucks. But I think we need to equip them to say, but also move to more fixed fee project structures. So there are ways to structure your pricing that can deal with that in a way that avoids those conversations. But again, I don’t know who is enabling SMEs who are already stretched thin to figure out how to cope with that. That’s definitely something I worry about, is corporate consolidation, noting that in Australia in particular, I saw a stat that something like over 99% of businesses in Australia are SMEs. Like, the corporates we’re talking about actually make up a vanishingly small amount of the overall economic ecosystem here. So yeah, just something to think about. Interesting.

00:40:54.44 [Val Kroll]: So is the measurement piece, because it feels to me like analysts are uniquely poised to measure cause and effect. And so if this could be one of the other areas that we could have a little rallying cry to the analytics community to say, hey, if you’re going to fire the CS team, a customer success team at Klarna, we’ll pick on them again for this example. Let’s, oh, I don’t know, think about what we intend that to achieve and let’s measure that. And if it doesn’t, then let’s figure out what the next plan is or whatever. But is that like another area where you think analysts could jump in and step up to help with understanding how this is impacting organizations versus just like, oh, there’s 10 less people here now. So that must be better.

00:41:42.51 [Aubrey Blanche]: Right. So I would say yes. And I would go a click deeper. There’s something that analysts can bring to this that folks outside of the field might not, which is it’s not just what could go wrong, but what is the leading indicator that would tell us it is? And what does our data infrastructure allow us to measure? So that, to me, is the really exciting thing, is that an analyst, because they’re deep in the systems, they understand the data, they’re actually able to translate this idea of harm as a theoretical thing into a set of monitoring procedures that would actually tell you if something’s going wrong. Because the impression I don’t want to give is like everything is terrible all the time. Like, I operate from a slightly different frame, which is everything could go wrong all the time. And like, if that is my baseline belief, then I personally am motivated to do things to reduce that risk. And so it’s not meant to be doom and gloom, it’s meant to actually just be responsible. So that’s what I would say, is like, okay, define the bad. Like in the case of Klarna, it could be something as simple as like, okay, well, we need to track customer satisfaction with individual interactions or successful resolution of issues, right? It doesn’t need to be like a social justice coded metric. You know, in my head, I’m like, are different customers getting different quality of experiences because they’re having different types of problems, et cetera. But like, we’ll start at a baseline of like, do we see a decline in customer satisfaction? But also the analysts can say, hey, given that we’re not sure about this impact, maybe we just do 10% of the objective for two or three months to actually measure that before we make a decision about whether this is a broader kind of initiative, whether that’s workforce reduction or redeployment or implementing technologies, a customer service chatbot as an example. 
So I think that’s where analysts have a really special and unique role, and quite frankly a really powerful one, to lead their organizations to be more responsible. And there’s also a lot of debate about like making the ethical case versus the business case. The reality is they’re both tools and which one works will depend on the organization you’re in. I’ve worked with organizations that go full in on the ethics case and the leaders get upset when you talk about financial returns. And I’ve worked with organizations on the other side that are like, this is about like the board reports every quarter. And I’m like, cool, if I need to explain this to you in money, it’s fine as long as we get to the outcome that we all agree is good, which is, you know, creating customer value, which is smooth operations, which is avoiding screwing people over. Like as long as we end up in the same place, the path we take is kind of irrelevant.

00:44:25.31 [Moe Kiss]: I think that’s like literally a 101 in how data practitioners work, which is always like figuring out what does the person making the decision care about, and then how do you frame your analysis and your recommendation in a way that speaks to the thing they care about.

00:44:39.02 [Aubrey Blanche]: Totally. But you’ve just proved the point that I made earlier, which is that we already have most of the skills to do this well.

00:44:47.38 [Moe Kiss]: Oh, burn. Look at you full circle.

00:44:50.64 [Aubrey Blanche]: Yeah, not to be like, I was right, but I think that’s actually more everybody else is already capable of being right.

00:44:57.13 [Moe Kiss]: So one thing we haven’t talked, like, I feel like we haven’t gotten into the actual nitty-gritty, like I could spend another five hours talking to you. But one of the things that we did chat about as we were like preparing for the show was about agentic AI and giving up agency. And I would really love to hear your thoughts about those trade-offs about how people give up agency and what folks are willing to give up in terms of speed. Is the sacrifice worth it? And I feel like we’ve touched on that, but maybe not as deeply, particularly with the agentic AI example.

00:45:34.65 [Aubrey Blanche]: Yeah. This is a bit of a conjecture, but I feel strongly about it. But if anyone wants to yell at me in the comments, I’m happy to be proven wrong. I’m thinking about the study that Anthropic put out called Disempowerment Patterns, and what it showed is that people very often gave up agency to AI, which I find really concerning because there’s other research that shows that the majority of people don’t actually understand how LLMs work. If you don’t know LLMs, they just predict what the next word most likely is. They’re really good at producing things that look like language, but they don’t actually know anything. There’s no consciousness, there’s no intention behind it. It’s literally just like, I think that people give up agency because they don’t actually understand the problem that they’re faced with. I think there’s also research that shows that the people who are most likely to give up agency are the least skilled at the thing that AI is doing. So there’s this concept called like, I think it’s AI acceptance, which is like the rate at which someone accepts the output of the AI versus challenges it or changes it. And there’s a strong correlation between the expertise that someone has in the domain and their likelihood to challenge AI. So someone with more expertise rejects AI because they have the ability to evaluate the quality of the output. Whereas if you’re not an expert, you actually don’t have the underlying knowledge to understand whether the output is valid or not. And so you tend to defer to the model. Contrary to what’s happening, the ideal behavior is that you only use AI in places you have the expertise to evaluate the output. But that’s not what happens.

00:47:19.77 [Moe Kiss]: The total example that’s coming to mind is using AI for data analysis, right? Because I absolutely will go back and forth. But like you said, I probably have a stronger threshold of AI acceptance because I’m an expert in that area. So I know when something’s not right or something’s off or I have that intuition and that experience the 30 years built up, maybe not 30, I’m not that old. But I had that experience built up. Whereas for someone else who’s trying to use an LLM to do data analysis, they’re much more likely to accept it on face value. And therefore, the risk increases because they don’t have the expertise.

00:48:00.62 [Aubrey Blanche]: Oh, I never knew that’s what that was called. Yeah. And you think about the idea that even some basic practices that you would practice in data science or kind of analytics is that you run, let’s say, I don’t know, the last time I programmed and did analysis, it was in R. So I don’t know how out of date that is. I’m still, I’m probably starting like I’m coming from the 1800s. But like, you run your code, you still do some cursory checks to make sure nothing weird or unexpected has gone on. So that goes back to my point that like data folks have the skills to deal with this, which is like, I never trust an AI output. So I’d use it for research and editing and all these things. But I’m always asking AI to produce links. I always go read the original source of anything that’s being analyzed or presented to me with an LLM, but it speeds up my acquisition of stuff on a certain topic so I can spend more time analyzing and less time searching. So that’s like an example where I’m expert enough to know that LLMs bullshit me. And so I always have just a dot of skepticism that what I’m reading is true. And so again, that’s an attitude that you can build, which is trust is earned. And I have not seen evidence that this technology is deserving of our full trust. But again, I really think teaching AI literacy has to become a basic skill that’s taught in primary schools. I think it was in Oakland. This is a bit of a tangent, but I promise I’ll come back. There was a focus around public health in Oakland, and so they did a really interesting community-based thing where they found that teeth brushing was really highly correlated with a bunch of other positive health outcomes. I don’t know the science behind it, but clean mouth, much better body function. 
And so they actually made it so that they ran these community programs where it became normalized for community members to teach each other about three facts about teeth brushing that were shown to promote better brushing behavior. And I think that’s actually what we need. We need to think about AI literacy as a public health problem, and to say like there is a baseline of competence that we want everyone to have. And I think to your earlier point, because of how fast the technology is moving, my personal belief is that organizations have a higher ethical responsibility to teach their employees safety behaviors because the reality is the government cannot and does not move fast enough to achieve those things on a scale that we want. And I don’t think it has to be like, you don’t need to spend $250,000 on responsible AI training. You can literally run it for free where you’re like, here are the three AI behaviors that we encourage. One, like always check outputs. Two, be careful with sensitive and confidential information. Don’t put it in tools that aren’t locked down. Like you can teach people that in 10 minutes and reinforce it over time. Again, corporate leaders have the skills to pull off setting expectations for their business. This is not something that’s outside the realm of capability of anyone who’s getting paid to lead an organization.

00:51:15.04 [Val Kroll]: I like that. So much to think about.

00:51:20.76 [Aubrey Blanche]: But I think it goes back to, like, you talked about personal responsibility. I think we all have a responsibility. And what that responsibility is changes depending on the power we have access to, the systems we have access to, the work that we’re doing. But I hope that’s an empowering message, which means that you can do a lot. Because I think it can be really easy to go, oh, this is all big and structural and scary and whatever. But imagine if each of us did one slightly more responsible thing every week. That’s actually fundamental systems change, and it doesn’t actually require enormous sacrifices and changes on behalf of any one person, but that takes us getting a collective lens on what it means to achieve safety and responsibility with this tech.

00:52:06.68 [Moe Kiss]: I have a very weird one that has… I have not fully processed this, and so I’m just going to talk about it out loud because I want to get your thoughts because that’s what I use the podcast for. Okay. One of the things that An amazing one on the team, Jennifer, was talking to me about planning and how important planning is. Basically, the analogy she gave me is like, Moe, we need to know we’re going from Melbourne to Sydney. We don’t need to know that we’re catching a plane, a bus, or driving a car, but we need to know we’re going from Melbourne to Sydney. I was like, that is excellent. That’s a framework I’ve used now for how we think about planning. because we might have a path, I promise, I’ll come back. But we have a probable way we’re going to get there, but it might change as we learn new things. I was trying to think about this in the context of AI product development the other week. What was bubbling up in my mind is, Maybe it’s that we don’t know that we’re going from Melbourne to Sydney but we know we want to go from Melbourne to the beach. We just don’t know which beach we want to go to and so what might be different about that process is we need to also figure out how we’re going to get there and then we need to figure out which beach. But then I think the bit that’s been rolling around in my mind is, am I treating AI product development as different to other product development because I’m giving this, what’s the word, uncertainty to it that maybe doesn’t exist? I’m curious, Aubrey, you’ve said a couple of times about AI just being a stack of other technologies and we’ve seen this all before. It’s nothing actually that transformative and a lot of it is hype. I guess I’m just processing live. Am I thinking about it with the hype layer on and actually we’re just going from Melbourne to Sydney or is it slightly different and we do need to have that different mindset?

00:54:06.41 [Aubrey Blanche]: So I think it is slightly different. Like I think most of the things we think about kind of standard software development apply, like basic, you know, don’t put your code on the internet unless you’re intentionally open sourcing, etc. Like those principles. But the reality is like traditional software development for the most part, software does what you tell it to do. The unique risk posed by AI is AI sometimes does stuff you didn’t tell it to do. Not always. And so the way to deal with that uncertainty is, and what I see happening is some people go, oh, well, it’s uncertain, so we can’t do anything, throw our hands up. And like, I think that’s quite silly. We can say, okay, I know that there is a degree of uncertainty, and any risk professional will tell you that uncertainty is a fact of the universe. And there are actually very good ways to manage it. And from my perspective, one of the things I’m often telling companies that are saying, you know, I know that I want to go to the beach, but I’m not sure which beach I’m going to, is to say, but there are certain things you can do to prepare. So for example, you need a mode of transportation. You need to know the rules about how to operate that mode of transportation safely. Do you want to take a bus and get a ticket? Do you need to know how fast you can legally go in New South Wales without getting a ticket on your license? So there’s like things that are knowable that you can plan for and you should do that. But then you also have to operate with an understanding of something will go wrong, and I may or may not detect it with AI. And so the question is, great, what path will I find out that thing has gone wrong? And how do I plan to respond to something unknown going wrong? So like an example from corporate, like corporates have crisis communications frameworks. They don’t know what’s gonna blow up, but they know who they’re going to call when that thing does blow up, and what the roles and responsibilities are in responding to that thing. So again, Moe, I think you’re actually right. I think we’re just, we need to borrow from more fields of expertise to manage these things. But a lot of the skills and frameworks that are needed to manage them are not things that need to be invented.

00:56:15.31 [Moe Kiss]: Oh, I love this. But the thing that, okay, the thing that I took away from what you just said is like, if we apply this intentionality as well, it might also help us figure out a better path to get there that minimizes the risk. So like we might figure out taking a bus will mitigate certain risks that driving a car won’t. And so therefore that’s the, oh, okay. I love an analogy.

00:56:40.53 [Aubrey Blanche]: I love how well you played with it too. That was, I really liked your beach analogy. I was like, you just come up and seem cold, but. I don’t know if I want to go to that one. But yeah, I think that’s it. And again, there’s this like underlying thing that happens with people who have expertise in one field. And I would say I tend to see it manifest among certain demographics more than others. Take that how you want. Look at my social media and you’ll figure it out. But people tend to think that because you have expertise in one domain that you therefore make good expert level judgments in other domains. And I think in tech, as an industry, we have so deified engineers to be like, oh, they’re amazing. And let me be clear, engineering is hard. It’s amazing that people can do that. I stand behind that. But because you are amazing at building technology does not necessarily mean that you are qualified to evaluate and manage ethical risk or operational risk. It’s that different people have different expertise, and we need to recognize that the people building often have not been trained or exposed to the types of problems they would need to be able to make expert level decisions about these things, which goes back to my point about committees, which I know is really exciting. But my point is that I do not believe that safety and goodness can be achieved without getting a lot of the right bits of expertise in the room. And someone’s going to tell me they’re going to like, go on ChatGPT and like program a suite of agents to function that way. And I’m not really sure if I believe that that’s sufficient. One, for the particular reason that like, you know, we’re talking about novel problems, and extrapolating outside of samples is a problem every data person knows well. So that’s what I would say, is like we need to be really careful, and part of it is the underlying value of expertise that’s non-technical.

00:58:33.98 [Moe Kiss]: That feels like an incredible place to wrap, which is impossible because I swear I could sit here all day and just keep chatting about this. But the last thing we do on the show is we go around the horn and we share what’s called a last call. And something that might be of interest to our listeners, not our users, our listeners. Just something you’ve read, you’ve watched, that’s interested you. We will, of course, share all the links to Aubrey’s amazing reading list in the show notes. But Aubrey, do you want to go first since you’re our guest?

00:59:04.06 [Aubrey Blanche]: Oh yeah, I’m like fully alone, so if anyone does anything, just like drop everything and watch Heated Rivalry. When it’s good, like come for the hot guys, stay for all of like the completely renorming of like queer media, but also get in on the discourse online. The ethics and the quality and the values that the people who are engaged in creating this show are exhibiting is, I think, transformational in terms of the way media comes into the world and what it does. One, it’s just really fun, but if you’re into more critical analysis and things like that, it’s this rich well of things to think about. For me, ultimately, the way we could do things differently and better.

00:59:46.54 [Moe Kiss]: I love it. Nice.

00:59:47.30 [Val Kroll]: I love that. What about you, Val? This is a Medium article. I subscribe to a lot of engineering and product and design content to get the diversity of perspective, not just all analytical content. I actually clicked on this one. I started reading it thinking I was going to hate it. I thought this was rage bait for the analytics crew, but I ended up really liking it. So it’s called I Don’t Care What You Build (And Neither Should You) by Joel Dickinson. And he’s talking a lot about like he has quotes about like, you know, Ronny Kohavi saying that, you know, only 10 to 30% of experiments or product features actually add any impact. So like, why should we care? And like, who cares about the target? And I’m like, like clutching my pearls. But then he starts talking about the framework that he thinks works. And he was saying, I found that good leadership in engineering boils down to asking relentlessly, how will we know? So not what you will build or what technology we’ll use, don’t show me the architecture, just how will we know if the problem is solved? And I was like, okay, you got me. I love that whole thinking about the problems frameworks and things like that. Anyways, definitely not from an analyst perspective, but I really enjoyed it. It was a good read. It was a fun one.

01:01:02.13 [Moe Kiss]: I have a bit of a weird one today. Normally, it’s something I’ve read or looked at, but this time, I’m going to crowdsource some help. I’ve been thinking a lot about measurement of AI products. And obviously there is like a wealth of information, but I think the angle particularly that I’m thinking about is as it relates to like user engagement and users like having a successful experience and how that potentially differs from like an AI product versus like a more traditional product and like what that intersectionality is. So I’m not like talking about like evaluating a model, I’m more about like, how do we understand if a user has multiple designs that are generated from an AI output? Does that count as someone doing something creative or is that like, I’m just like really trying to wrestle with some of these concepts and I don’t have a firm view yet. So it’s more of a shout out that if folks are coming across interesting articles or perspectives on this, I would love you to share them with me because it’s definitely top of mind, especially like when you have like AI products and non-AI products in your stack and really wanting to be able to paint a holistic picture. So anyway, that’s just, I thought I’d share my conundrum at the moment. I like it. Okay, so this has been such a wonderful conversation. Epically huge thank you, Aubrey. Like, just love having you on the show. We’re so appreciative. Oh my gosh.

01:02:35.92 [Aubrey Blanche]: Literally call me anytime. I’ll move my calendar to show up. Right. I’m sorry, my diary. My diary. I’m trying to assimilate here.

01:02:43.51 [Moe Kiss]: Nice. So what we would love after the show is for you to please come and leave us a review or a rating on your listening app of choice and feel free also to request a sticker on the analyticshour.io link. You can also reach out to us on LinkedIn and the measure Slack, and also through our email contact at analyticshour.io. So that’s a wrap on AI the teenager. A very big thank you once again. And for all of my co-hosts, who today is just Val, keep analyzing.

01:03:23.06 [Moe Kiss]: Thanks for listening. Let’s keep the conversation going with your comments, suggestions, and questions on Twitter at @analyticshour, on the web at analyticshour.io, our LinkedIn group, and the Measure Chat Slack group. Music for the podcast by Josh Crowhurst.

01:03:41.02 [Charles Barkley]: Those smart guys wanted to fit in, so they made up a term called analytics. Analytics don’t work. Do the analytics say go for it, no matter who’s going for it? So if you and I were on the field, the analytics say go for it. It’s the stupidest, laziest, lamest thing I’ve ever heard for reasoning in competition.

01:04:08.49 [Val Kroll]: Rock flag and let’s get intentional.