#297: Durable Wisdom in an Age of AI Slop

What do colors, soup kitchens, and mountain climbing have in common? They’re all part of the mental models that have shaped how we think about analytics, and they’re exactly the kind of durable wisdom that matters more than ever in an age of AI slop. This campfire-style conversation among the co-hosts reveals the concepts, books, and aha moments that have stuck with us across decades of analytics work. From the magic of randomization to the critical distinction between outputs and outcomes, we share the frameworks that guide our thinking whether we’re writing SQL by hand or asking Claude to do it for us. It turns out the most valuable analytics wisdom isn’t about tools or techniques—it’s about understanding how humans actually make decisions, build trust, and collaborate effectively. Some things never go out of style.

This episode is brought to you by Prism from Ask-Y—your agentic analytics platform for automating analytics, exploring data, creating repeatable workflows, and delivering accurate insights—all without the need for manual query writing.

Links to Resources Mentioned in the Show

- (Shiny App) The Magic of Randomization Illustrated with Color

- Outputs vs. Outcomes: this is a good resource/explanation of the core idea, but Val also addressed the concept in this Medium post, and Tim digs into it in Chapter 5 of his book (available in print, ebook, and audiobook formats!)

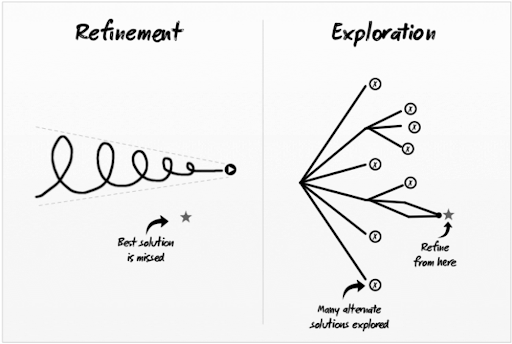

- (Book) A/B Testing: The Most Powerful Way to Turn Clicks Into Customers by Dan Siroker and Pete Koomen. And the diagram from that book that hit on local vs. global maxima through the lens of “Refinement” vs. “Exploration”

- (Article) Addition bias (Ingvar Kamprad / IKEA)

- (Book) Information Dashboard Design: Displaying Data for At-a-Glance Monitoring by Stephen Few

- (Book) Storytelling with Data: A Data Visualization Guide for Business Professionals by Cole Nussbaumer Knaflic

- (Article) Chasing Statistical Ghosts in Experimentation

- (Book) Field Experiments: Design, Analysis, and Interpretation by Alan S. Gerber and Donald P. Green

- (Book) First, Break All the Rules: What the World’s Greatest Managers Do Differently by Marcus Buckingham and the Gallup Organization

- (Book) Switch: How to Change Things When Change Is Hard by Chip Heath and Dan Heath

- (Podcast) Choiceology with Katy Milkman

- (Book) The Science of Storytelling: Why Stories Make Us Human and How to Tell Them Better by Will Storr

- (Podcast Episode) #240: Asking Better Questions with Taylor Buonocore Guthrie

Photo by Joris Voeten on Unsplash

Episode Transcript

00:00:00.00 [Announcer]: Welcome to the Analytics Power Hour.

00:00:08.92 [Announcer]: Analytics topics covered conversationally and sometimes with explicit language.

00:00:15.80 [Michael Helbling]: Hi everyone, welcome. It’s the Analytics Power Hour, and this is episode 297. You know, at this point, I think we’ve all been hit with some kind of output that is obviously AI generated. I mean, it even has its own term, AI slop. Oddly enough, most people don’t appreciate being hit with AI output without it being thought through by a real person, and it got us thinking. It’s not that what AI produces is always bad, but there are starting to be different categories of content based on whether AI produced it or not. And maybe there is something to be said for analytics wisdom that existed way before AI, and that will keep being true no matter how AI is involved out into the future. So we reach back to the formative moments of our own careers to share some of the hard-won wisdom from our careers in analytics. Maybe this episode will lack a little AI, but I think it’ll still be worthwhile. So let’s introduce the people who make up the show. Hey, Val Kroll.

00:01:16.92 [Val Kroll]: Hey, Michael.

00:01:18.12 [Michael Helbling]: I’m so glad you’re here. And we’ve got Tim Wilson. Howdy, howdy. And I still put up with you somehow, so that’s good.

00:01:28.52 [Julie Hoyer]: Okay.

00:01:29.52 [Michael Helbling]: And Moe Kiss. How you going?

00:01:33.04 [Moe Kiss]: How you going? Oh, I love it.

00:01:35.04 [Michael Helbling]: Thanks. Yep. And Julie Hoyer.

00:01:38.04 [Julie Hoyer]: Welcome.

00:01:39.04 [Michael Helbling]: Hey there. And I’m Michael Helbling. So, yeah, we’ve got the whole team together. We’ve all seen so much in our careers pre-AI. And so what’s some wisdom that exists that’s going to be useful, whether AI is involved or not? Who wants to kick us off with something they’ve learned over their career?

00:01:55.12 [Julie Hoyer]: Oh, I will.

00:01:56.64 [Julie Hoyer]: Because this one, I feel like sticks with me still to this day.

00:02:01.24 [Julie Hoyer]: And this was more than a year ago. This was three, four years ago. But it was actually back when me and Tim were working together. And I’ll never forget, we were talking about randomization and trying to figure out the best way to represent it, different ways to think about it. I think this was right around, Tim, when we were trying to do a talk around blocking, randomization with blocking and things.

00:02:29.84 [Julie Hoyer]: And so we could not figure out how to make it friendly to people, people who had maybe

00:02:35.88 [Julie Hoyer]: not thought about it as in-depth as we were at that moment. We were way too in the weeds. And I remember slacking Tim like the next morning, like right at the beginning of work and saying… So I had a thought.

00:02:49.68 [Tim Wilson]: Wait, it was the next morning because it was a glass of wine sitting on your couch, thought, I believe.

00:02:55.24 [Julie Hoyer]: Yes. Yeah, I was like, maybe I even slacked you that night and was like, look at this in the morning.

00:02:59.40 [Julie Hoyer]: But I was like, I just was having a glass of wine and it came to me.

00:03:02.76 [Julie Hoyer]: I was like, I feel like a good way to explain randomization would be through colors. And so I told him how, what if each color represented a characteristic and when you randomize the population, you could see how the colors were split between test and variation and what if those colors were blended to show a group color. And then you could see that the color comes out really close because it’s randomized across the characteristics. And so Tim, I knew he couldn’t resist and that’s why I told him. So I was like, that’s about as far as I can take it. You made a whole Shiny app to let you choose the size of your sample, to choose how many characteristics, to choose the predictiveness of those characteristics on your outcome, the mean, all these things. And then you could actually run a simulation and see the colors blended. And then you could flip on and off blocking. And there have been multiple times, even earlier this year, when I’m thinking about certain things with randomization, I’ll still go back to that Shiny app and play with it. And it helps me a lot with talk tracks or simplifying it or reminding myself of different characteristics of it. So that’s one of my favorites.

00:04:18.32 [Tim Wilson]: I like that one too because we got a little carried away because it was like, well, this work, I think it was built very quickly. And then Julie was like, what about if we, you think we could do this? What if we did a, and I was like, what if we did, and so it got a little involved.

00:04:33.96 [Julie Hoyer]: My hands never touched the keyboard. That was the best part. I got to the whole thing.

00:04:39.96 [Moe Kiss]: It was like voice control. Why do you think it’s stuck with you so much as like the antithesis of like the AI slop world?

00:04:49.56 [Moe Kiss]: Like what was the, I don’t know, the bit that just made it really resonate or it keeps you

00:04:55.92 [Moe Kiss]: coming back.

00:04:56.92 [Julie Hoyer]: I think it’s the visualization of it. I don’t know why the, the color is what stuck with me. And sometimes like a good visual is so much easier for me to go back to. And I think the AI part, this is, I swear I’m going to loop back to your answer. But I even remember back in college starting in engineering, they just wanted to give you like an output to use. They’d say, just use this output, use this output, like a formula. And I, and I know I’m not alone this way. I always needed to understand why. So that if I forgot the perfect formula, I could like reason my way back to my understanding. And there’s something about that Shiny app and the visualization of a color that just like, dang, it like hit something in my brain that when things get fuzzy and I haven’t talked about those things in a while, I haven’t thought about those things in depth. Like it kind of brings back some of that understanding. Like I can have a good starting point and like think through it again. And the AI part is like, again, they just give you output and that output is actually like the variation of an AI, you know, agent or whatever, like giving you something. It’s never exactly the same. So I like that it is steady and a starting point for me.

00:06:10.24 [Tim Wilson]: I love that. The reason I got so excited, I think it is this color piece because I will chime in that when we talk about random assignment and kind of the power of random assignment and we’d say, oh, so if you have, you know, a thousand people and you randomly split them, you’re going to have roughly the same number of men and women in each group and roughly the same number of household income and like that idea that you’re making two groups that are effectively the same, like it’s just, it’s abstracted when you talk about all the characteristics of what’s in it. And I think when I thought about it, like, I mean, it literally is kind of a color mixer that it just sort of generates a palette and then you see the average color and it may be like this lime green and it’s slightly different shades, but it’s like a simple math thing. So to me, like it just goes into my head of saying, this is what’s happening while we’re making two groups that are pretty damn close to the same. But the fact that, I mean, that was to me kind of the really useful part of that is I was trying to grapple with how to actually internalize this. And then it was very easy to say, okay, now that extrapolates to these other more nebulous characteristics of psychographic details and demographic details.
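
[Editor's note] The color-mixer idea described above can be roughed out in a few lines. This is only an illustrative sketch, not the Shiny app from the show notes: the function name `random_split_colors`, the uniform RGB encoding of each person's characteristics, and the fixed seed are all assumptions made for the example.

```python
import random

def random_split_colors(n_people=1000, seed=42):
    """Randomly assign people to two groups and return each group's blended color."""
    rng = random.Random(seed)
    # Assumption for illustration: each person's mix of characteristics
    # is encoded as one RGB color with channels drawn uniformly from 0..255.
    people = [
        (rng.randint(0, 255), rng.randint(0, 255), rng.randint(0, 255))
        for _ in range(n_people)
    ]
    # Random assignment: shuffle, then cut the population in half.
    rng.shuffle(people)
    half = n_people // 2
    control, variation = people[:half], people[half:]

    def blend(group):
        # The "group color" is the per-channel average of its members.
        return tuple(sum(p[i] for p in group) / len(group) for i in range(3))

    return blend(control), blend(variation)

control_color, variation_color = random_split_colors()
# Largest per-channel difference between the two blended colors.
max_gap = max(abs(c - v) for c, v in zip(control_color, variation_color))
print(control_color, variation_color, max_gap)
```

With a thousand people, the two blended colors should land within a few units of each other on every channel, which is the "pretty damn close to the same" effect; shrinking `n_people` makes the gap visibly larger.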

00:07:27.20 [Moe Kiss]: But it sounds like it’s solving the problem of, to quote a Canva value, making complex things simple, right? Like it’s about understandability. It’s about a way to see something so that it like clicks in someone’s brain. And it’s funny, I had someone in my team that was doing some work the other day and I went through it afterwards. So like we had a couple of different strategies and I was kind of like, we need to go through these strategies. We really need to make sure that they’re being based on the data points that we have available. And like that easily is a task, for example, that probably my instinct would have been,

00:08:04.36 [Moe Kiss]: I’ll put it in AI and see what’s like, here are the data points, like what’s missing.

00:08:09.24 [Moe Kiss]: And this person went through it so rigorously. And the reason that the work like came back and I was like, oh, I get this, this is high quality work. And like I can really understand it is because it’s also that falling, it made it click in my brain of just like, not here are the differences, but here’s the assumption I made about why I think this is different. And here is the like, the leap that’s been made here. And I think it’s probably because of this. And I think it’s, I don’t know, when I just keep coming back to this point about like quality, it’s like when you can see the quality and it, someone finds a way to put it into a narrative that then clicks in your brain. Like that’s where I feel like the gold is right now. And it doesn’t feel like there’s a lot of it.

00:08:55.32 [Tim Wilson]: That does kind of make me want to go with one of mine because I’m seeing a direct link from that, which is also kind of just a concept or something that I find myself thinking about and talking about with clients and business partners a lot. And even with analysts is the distinction between outcomes and outputs and how we, as a, we tend to pick metrics that are outputs when we really care about our outcomes. And I traced this back 20 years at this point when I was on a United Way committee in Austin. And there was this retired social worker who we were, we were reviewing programs for funding. And so we had a lot of proposals coming in and we were in these series of committee meetings and we’d all have to like read, I don’t know, 10 proposals and then we’d meet about them. And he kept having this kind of consistent bit of feedback on multiple programs where he would say, well, these are like, you always have to say how you’re going to measure the program. And he would say, oh, well, these are, these are like output metrics and we really want outcomes. And I was 10 years into my analytics career at that point. And I was like, what are you talking about? And it slowly, he started to explain and the example, he had various ones because he’d point to them in specific programs. So the one that I come back to was talking about like food pantries and how they like, they would count like the number of meals served or like a soup kitchen number of meals served. And he was like, yeah, they can just like count the number of trays that go through the line. And that’s the number of meals served. And we know that’s good. You’re serving meals. He’s like, but really what we’re trying to do is reduce food insecurity. We’re trying to keep people from going hungry, which is related. You want your outputs to lead to an outcome. But he was like, we really want to push them to say, can they get more to an outcome oriented measure? 
And I’ve taken that over the course of that work, I was like, this is like profound for what I’m doing in my day to day with my colleagues at work and trying to get them to think about, this is why a click doesn’t matter. This is why the click through rate, I mean, doesn’t not matter. It’s just those are outputs and trying to guide discussions early on into outcome oriented metrics and business outcomes and then going from there and say, how close can we get to measuring that? And unfortunately, I think the guy’s name was, I am 99% sure, unless I’ve been fooling myself for years. His name was Pat Craig. He was a retired social worker. I have every four or five years, I go try to find him because I come back to that again and again and again. But it was another one that made it very tangible because it was really in the real world. People who are in need talking about outputs versus outcomes really solidified it, made it very tangible, but it then applies in the more, really, we’re not curing cancer here, we’re talking about marketing stuff, but the concept still applies.

00:12:00.60 [Julie Hoyer]: That’s a good one. I love that one. I use it all the time. Do you know how many people I’ve said that to, thinking, oh, they’ll know about this? And they write it down, they’re like, oh, that’s good. So I’m like, oh, thanks, Tim.

00:12:11.16 [Tim Wilson]: Well, I mean, there are times where I feel silly asking, I’m like, do we, are we, I’m sure some of you are familiar with this because I’ve thought about it for so long. To me, it’s one of those like when the light bulb goes on, you can’t stop seeing it.

00:12:29.08 [Moe Kiss]: Tim, the light bulb has just gone on for me. Just to be clear, I messaged you. It’s like a message because I was like, maybe I shouldn’t derail the whole show. But I think I’m thinking about it as like input and

00:12:43.16 [Julie Hoyer]: output. Yeah, but here we go. But anyways, or we could. No, but I’ve been thinking about it as like

00:12:50.76 [Moe Kiss]: input and output metrics. And I say, like in my mind, an output metric was an outcome. But like, I just feel like that framing is so much better. And it truly is clicking in my brain for the first time. And I’m, I’m sure I’ve heard you say this before, but sometimes like someone just said something a slightly different way, or your mind is at the right time to absorb it. Anyway, thank you. This is going to be very helpful. You’re welcome.

00:13:15.80 [Tim Wilson]: And I’ll also say this makes it sound like it’s binary. The older I get, the more I’m like, it’s not, I say like, you want to be skewed towards outcomes. You can have debates about, you know, is a monthly active user, is that an output or an outcome? And it’s kind of, it’s context dependent. And it’s not, but if you can ground it in that overall, overall pure version, I found it very,

00:13:38.60 [Val Kroll]: very good. You’re just getting soft, Tim. It’s binary. There’s no gray.

00:13:43.16 [Tim Wilson]: That’s right. You said the roles have reversed. I never ever seriously about, wow, Tim, you’re just mellowing out waiting for that to happen.

00:13:54.60 [Julie Hoyer]: So chill. Hey, Tim, have you tried out vibe analyzing yet?

00:14:03.96 [Tim Wilson]: Oh, that phrase hurts me deeply. But I did download my Strava archive to just try to like analyze my workouts. And it turned out to be like 28 different CSVs with everywhere from like two to 103 columns each. Oh, sounds messy. What’d you do? Well, I mean, for giggles, I tried Prism by Ask-Y. That’s Ask, the letter Y. I uploaded like all 28 files and just started asking questions through their chat interface in plain English. Started off by asking like, how many miles do I run each month? And it worked? I mean, it did. It initially it gave me results pretty quickly. It was, it was like really fast. It actually wasn’t, wasn’t perfect, but that really wasn’t a Prism issue. It turned out that Strava data just refers to distance. Like that’s what the column is labeled and that distance is in kilometers. So it took a few iterations, still a little bit of the human analyst to say, wait a minute, those, I wish I was running that far, took a few iterations with the platform to get that figured out. But once we did it, it handled that conversion, not only on that query, but like automatically going forward with future queries. Oh, that’s actually pretty cool.

00:15:21.56 [Michael Helbling]: It’s just like how we want to handle mishmashes of different sources and medium names in a consistent way whenever we’re looking at working with like digital data. Exactly. And I also got to

00:15:32.52 [Tim Wilson]: kind of check out their Mingus query language, which it’s like a more readable form of SQL with the actual SQL just like one click away. And I even like built some quick visuals and some quick reports. It’s also got like a local mode for keeping all the data on your machine. I actually haven’t tried that out yet. I just did the cloud version, but it’s pretty nice feature.

00:15:54.20 [Michael Helbling]: Nice. So yeah, it sounds like something worth checking out. You can head over to ask-y.ai, join the Prism beta waitlist and use the promo code APH when you sign up, and that’ll move you up to the top of the list. We can guarantee that you’ll get access faster than Tim finishes his next 10k probably. All right, back to the show. Okay, so I want to share one of mine.

00:16:23.56 [Val Kroll]: And I’ve worked with most of you and if you’re listening, I’ve worked with you as a co-worker, as my client, you will know this one of mine. In the experimentation realm and world, one of the concepts that lots of programs like to think about or keep in the back of their mind is the difference between a local and global maxima. And like the tried and true, you know, the analogy or the visual is like you climb to the top of the mountain. And now that you’re through those clouds, you see that there’s actually a secondary peak to climb. And I get that, that works. But there’s this visual that actually came from the book A/B Testing. It was the Optimizely book at the time, Dan Siroker, the CEO and Pete Koomen, who led their statistical arm and function. In there, there’s this visual that has, and I’ll try my best to describe it. There’s two sides

00:17:20.44 [Tim Wilson]: that are being compared. Hold it up longer. That could wind up as a YouTube short, you know, so this will drive people to the YouTube channel. Don’t you want to click through the full episode

00:17:30.68 [Val Kroll]: now? So on the left hand side, they’re describing refinement and that there’s kind of like this cone shape where like the squiggly is getting closer and closer to the star. But there’s actually a star to the side of it. And it says that that was the best solution and it was missed because they were kind of refining to this point. Whereas exploration is kind of this point that branches out and has like lots of arms to it. And so they did find the optimal solution. And the arrow is saying like, here’s where you refine from. And what I like about this image so much more than the local versus global maxima is because it gives you the visual of the consequence of not thinking big first or to not think about exploration or innovative type of thinking or testing first and going straight into like, how do we refine the micro copy on this page? And it’s like, well, was that the right page to send someone to in the first place? And so it’s about like staying curious at like a higher level because it’s not just, you know, like we’ll find these like little wins as we go, which is great, but they’re empty calories if you’re kind of missing the optimal solution. And so that visual, I bet you could find it, I have pasted that in no fewer than 50 presentations in my life. I’m quite confident because I think it’s just a really nice way to like cement the point of that concept home.

00:18:55.00 [Moe Kiss]: Well, it’s going in one of my presentations. It’s amazing.

00:19:00.28 [Val Kroll]: I like it. It’s good.

00:19:02.12 [Tim Wilson]: But is part of this just that the reality of corporate life is that it is much easier to be in the lane that you’re in and kind of refine? Because to do the exploration feels riskier and it often means you’re kind of reaching more broadly with ideas. So like just like business culture drives us to say, let’s do little tweaks and refinement. And like, do organizations see this and say, you’re right, we need to push ourselves to think more broadly, take bigger or broader or more exploratory swings?

00:19:54.04 [Val Kroll]: Yeah, I totally, I mean, how many times have you been like, we’ll give a marketing context like, well, when we ran this campaign last year, we only had two versions of this creative. So this year we’re going to have three. And so it’s like the smaller like, you know, I’m obviously being reductive in that example, but it’s like, based on what we did last year, we all remember that here’s one new thing we’re going to do different. And that’s like the optimization or like the refinement versus like, instead of just going direct to patients, what if we had a strategy for healthcare providers? And so like that would be the bigger swing. But to your point, Tim, I couldn’t agree more because it’s like, there’s no incentive for that because that’s more work, you know, more approvals, perhaps, you know, more budget overhead, things like that. And so I think people who are really excited about the outcomes of what that’s trying to do, and go back to yours, that those are the folks who really kind of like thrive in finding those. And you’ll notice that those are the people that lots of other people really like to work with inside of organizations, I will say, because they’re doing more exciting things in service of, you know, shared goals for the organization. But yeah, I think it’s not, it’s not the natural path, I agree. No, people want what they can control. Like in their in their lane, like you

00:21:11.64 [Julie Hoyer]: were saying, it’s hard to like look up and do that broader view, and then have to collaborate. But I think then what you said, Val, tying it back to outcomes, like, if people realize the shared outcomes, they were more focused on driving instead of their individual lane outputs, like maybe people would be more open to doing that instead of just the refinement.

00:21:30.68 [Moe Kiss]: Yeah, I was just gonna say, I don’t think the shared outcomes are always incentivized, like sometimes they are and sometimes they aren’t. But I think the one kind of the one push I would have on this framework as like, and I am a huge fan, I’m definitely definitely going to be borrowing this. I think there’s an assumption that you’ll always get to the star, like the point of refinement through exploration, and sometimes you don’t. Sometimes you explore, you do all the extra work, and it doesn’t add a significant amount of value. And I think, generally, those cases are pretty rare. But I think it does happen sometimes. They’re like, again, not binary.

00:22:10.44 [Tim Wilson]: Without any specifics, obviously. But I think of Canva as a company, like on a product level, very exploratory, like every time you turn around, they’re like, oh, yeah. And now, you know, it can make your hopes for you in the morning. So it seems like, I mean, like literally, I mean, it feels like, I sort of get updates through conversations we’re having. You’re like, well, yeah, can we get to them? Like, okay, there’s three other products that, what the hell?

00:22:40.92 [Julie Hoyer]: So it feels like that. Have there been, has Canva had like, pursuit of like specific,

00:22:48.20 [Tim Wilson]: like this is a whole new area that has gone nowhere and been shut off? Again, not asking for

00:22:55.40 [Moe Kiss]: any specifics? Yeah, I think so. I think the tension right now, though, is that we all need to lean more towards that exploration piece because of just the pace of AI products and features and how they’re shipping. Like, I think what particularly is a trap right now is if you’re in that retirement, we want to go towards this goal, we want to build this thing. Like, in today’s climate, that’s more dangerous than ever, I would say, because just the way things are changing

00:23:26.28 [Julie Hoyer]: so quickly. So I would say not necessarily like things getting completely like abandoned,

00:23:33.72 [Moe Kiss]: but more things getting refined and changed along the way.

00:23:37.96 [Tim Wilson]: I like that with AI. If you’re, if you do the kind of the MVP in multiple directions in a way that you can say, I’d rather try five wildly different things with AI in a minimal way with

00:23:53.08 [Julie Hoyer]: clarity on how I’m going to determine whether this is the best bet or not, than determine

00:24:00.52 [Tim Wilson]: we’re going to make the best chat experience using the latest LLMs ever and just like pursue that

00:24:08.28 [Michael Helbling]: and miss it. Well, I’ll share one that’s important to me. I don’t remember who first told me this, but it was about five years into my analytics career and somebody said to me, Michael, trust is hard to build and easy to break. And I think that’s more of a general statement, but applied to the world of analytics, I watched in my own career sort of people who believed when I presented an analysis and people who didn’t and losing trust with stakeholders was something I definitely experienced in the early years of my career and how much that put me in a position where I could no longer influence the business or business outcomes in certain areas. And so it really kind of hit home and I really kind of held onto that for, for the rest of my career was just sort of thinking about how do I continue to build trust when I’m working with business stakeholders when I’m talking about things. And I like, because it’s me, I don’t have any kind of format, formal like structure to that, but there are little signifiers I look for around like how do I know that trust is still there. And I use that to kind of guide how I, how I act around, you know, today my clients or stakeholders I’m working with of sort of like how is that sort of relationship which tells me then the influence I have as an analyst for that particular situation. So that one is one that has kind of always stuck with me because I love being influential. And for early in my career, I just figured if I showed you the data, it doesn’t matter who the messenger was, you would just say, okay, yeah, that’s the data and you would accept it. But the reality is, is the messenger matters quite a bit. And since I’m not Tim Wilson, you know, I had to

00:26:03.16 [Julie Hoyer]: like, you know, ramp up my skills. But this, this is like, I mean, honestly, like,

00:26:10.20 [Tim Wilson]: dear listener, if you’ll like that one, go back and listen to our last episode with Eric Friedman, because a lot of what comes up with that is that I think we think it’s, that means the data has to be perfect and the analysis has to be perfect. And I feel like what you model and what we talked about with him, a lot of it is like actually showing that you understand the environment they’re working, like you understand building trust has a lot more of the soft skill than my data is always perfect. Yeah, there’s that part of it, understanding

00:26:44.68 [Michael Helbling]: the context. And I think also not to use a bad word to you, Tim, but empathy has a lot to do with it

00:26:51.64 [Val Kroll]: as well. Don’t know what that does not compute. Yeah. No, because it’s like one of the signals

00:27:04.44 [Michael Helbling]: in like, if I’m working with other clients is if they come to me with a separate problem, that tells me I’m building trust because they are like, okay, yeah, you’re doing the project or whatever project. But if they come and say, Hey, here’s another thing that’s going on, you have any insider thoughts into this? That’s a great example to me of like, okay, we’re on a trust path together now. So that’s awesome. Let’s keep building that. So like, that’s, but yeah, you’re right. You’re absolutely right. It’s not just the data or the analysis. It’s also the context to show you understand show that you care about what they care about. And it’s challenging because as analysts, I feel like sometimes we want to not necessarily be front and center. And the reality is to influence decision making, you’ve got to be, you’ve got to be willing to kind of plant your foot and sort of be the face of the

00:27:54.04 [Moe Kiss]: data in a way. So the funny thing is, Michael, like you, you’ve been chatting about trust, and it actually makes me think, I don’t know, I know I’m obsessed with her big fan girl, but she talks about this so much. And like, she calls it work slow, which is enabled through AI. And I think like the way she articulates it is just so brilliant around like, and I feel like I’m seeing so much of this where like AI removes friction for shitty ideas, right? And so everyone just like, and I get it, I get it because I’m doing it too. Like I do, you can move faster, but it’s making us look like we’re productive, but actually we’re just producing more shitty ideas, right? And I think the bit that’s really challenging then is like being able to differentiate between the shitty ideas and the not shitty ideas, right? And so I think the thing that she really is like honing it in on, which has just been like flying through my mind. And it’s the same as the trust of the stakeholders, right? Is like AI can be this incredible tool to unlock a lot. But like, how do we really use it? How do we incentivize the quality over the velocity? Because at the moment, we’re really honing in on velocity, which is breaking that trust so deeply. And I think about it so much like, as a manager, every time you get a piece of work, as a person who’s producing work that is lower quality, like, I personally feel like you’re

00:29:22.28 [Julie Hoyer]: fragmenting trust with those around you, right? Well, but it’s, it’s, it’s got dual pressures,

00:29:27.24 [Tim Wilson]: you’ve got the pressures to use AI, that’s coming down from on high, use it, use it, use it, be efficient. When you’re delivering stuff and it’s polished and longer and grammatically correct and no typos and coherent and organized thoughts, but the person who’s getting it knows and then you’re under the gun to say, I can’t spend as much time whittling it down. Like they are competing pressures. The person who’s receiving it, I think you’re dead on, like it does, like you’re sending me like total AI slop, stupid sales pitches, but there was no trust there in the first place. It does feel like it gets sneakier when it’s with a coworker saying, oh, I, you know, here, here are the notes from the meeting. And you read through it and you’re like, this isn’t your voice and it’s a little off, but I can’t really criticize you because you did it quickly. But I also don’t feel like there’s a depth of thought. Like that’s a, but that’s the thing, right? Like I almost wish

00:30:25.32 [Moe Kiss]: there was a way to know if I have 10 docs in my pile from my reading list, which one wasn’t written by AI, because that’s the one I’m going to go and read, but there’s no way to know that, right? So then what ends up happening? Like I feel like we need to create a system that incentivizes the quality of thought and the depth and the fact that if you want something to be shorter or tighter, like you actually need, like you can’t just give it to AI. And I just, I feel like we’re

00:30:50.44 [Tim Wilson]: in this real conundrum. That can be a great idea. I’m going to, I’m going to vibe code an app to do that this weekend. That has collars. Yeah. Yeah.

00:31:01.08 [Michael Helbling]: Part of this, the thing about maintaining trust in, in this context, I think is about transparency as well. So like if you use AI, well, just lead with, Hey, this is AI generated. So just FYI, you know, so you know, or you say most of this is AI generated, but here’s my synthesis up top. So that way you can let people know the distinction. Like you don’t have to go read all this. You can, you know, it’s the same thing when you’re preparing an analysis and like you want to show all the cool things you did to the data, but you put it in the appendix because your stakeholders don’t care. They just want to know what the McKinsey title is and the, and the, the big insight. And if they trust you, they probably don’t need to dig much further. If they want to learn more or have more deeper interest, there’s sourcing material behind it. So a lot of times AI for me feels, feels like that where it’s like, okay, AI can pump out tons of content and, and honestly, beautiful content too. Like it’ll make a better slide than I make on average. A lot of times, you know, if I just sort of take content and plug it in, I don’t make the best slides. Okay.

00:32:07.16 [Moe Kiss]: Like I’m just being honest. I had AI make a slide deck for me the other day and it’s still the

00:32:13.72 [Michael Helbling]: work to do on the design side, I would say. No, no, no, I, I mostly don’t let it because even though it can make a pretty slide, it’s not, it doesn’t fit what I’m trying to do. So like, no, I haven’t yet been able to like really position a whole AI slide deck yet, but it’s

00:32:30.44 [Tim Wilson]: getting closer. Imagine, imagine if you just could just go on a walk with the dog and just talk, talk to the AI and then it’ll generate a deck for you. And it’s like, no, there’s, there’s value in the friction of me needing to go on the walk with the dog, think about it, stew over it, and then come down with what are the three things that I want to say.

00:32:50.44 [Moe Kiss]: Okay. I promise after this, I will get off my soapbox. I promise. But there’s an incredible, I mean, we all know I love the Acquired podcast, but there’s one on IKEA, which is amazing. And Ingvar Kamprad, I’m going to fuck up his name, I always do, but Ingvar is the guy who started IKEA, right? And he has, he had this principle, which I’ve just been thinking about, like, how do you implement this and how do you scale it? Basically, particularly as like people manager, right? Like we have addition bias. So like any time things seem hard or tricky, we try and add to it. We try and put more on, more process, more structure, more things. And his kind of like management rule was always simplify. So if we have a problem, what’s one thing we can take away, what’s one thing we can remove. And I’m, I’m trying to like really think about that with the team, like, how do we take stuff away instead of adding? And so is it about like, and again, like my, my bias is the addition bias, I’m straight away like, okay, let’s have an experiment template, like, let’s have measurement like standards, and I’m like, I’m adding, how do I take away so that we simplify? Because especially with AI, there are a lot of things where we’re adding, we’re constantly adding, we’re adding metrics, we’re adding, like extra reports, extra things, and it’s adding to the complexity, which is not the intent that we think we’re going to have. All right. Okay. I’m

00:34:12.92 [Michael Helbling]: off the soapbox. I’m done. No, I like that one. It goes back to what you said before, Moe, which was sort of like, execution is going to zero. So the quality of the idea or the quality of thinking now matters more than ever, because you can go execute on a poor idea so fast, but waste everybody’s time in the process. And so like, having some thoughtfulness ahead of getting everybody rolling

00:34:40.36 [Tim Wilson]: now is sort of like even more critical. I love the language of addition bias, because I think that it is so broadly applicable. And I’m going to like, throw a quick one in, and then we’ll go back to maybe more broadly, but, and it’s a twofer, but because to me, maximizing the data-pixel ratio from a data visualization and a clarity of communication, which I’ve been shouting from the rooftops for years. So Information Dashboard Design by Stephen Few, I read that like in 2006, Cole Nussbaumer Knaflic, it’s chapter three of her book, it’s like, basically declutter, Storytelling with Data: A Data Visualization Guide for Business Professionals. But that that’s in a narrow, that’s the addition bias of how do I provide, I’m going to deliver this to a stakeholder, my instinct to build more trust is to put more stuff in it. And what they really want is to remove stuff. And with AI, like with AI, when you ask it, summarize this, if you ask it to give you a two minute script, it will give you a four minute script. If you ask it, like you have to constantly tell it to do less. So it goes for processes, data visualizations, the analysis you do, let’s keep digging deeper, deeper, deeper, deeper. And it’s like, or can we stop

00:36:01.16 [Julie Hoyer]: and make a decision to move on? But it makes me think about, I was thinking about this initially, and now you saying that it makes me really want to try it. And I feel like I’ve done a little bit in passing, but when AI gives you the four minute thing, the huge long summary, I feel like a really good check on the AI is to actually ask it to give you like a four sentence summary. Because I feel like that’s where you can sniff out the BS faster. Like when I’ve asked it for a short summary, I know it’s totally missed the plot than like what I would quickly give as a four, some like four point summary or four sentence summary. Because sometimes it is like you start reading, you’re like, I guess it sounds good. Yeah, kind of. And then I think you’re more apt to just like trust it and maybe use that long format. But it’s like the old adage, sorry, it took me so long to like write you a short letter or something. I’m quoting it a little off, but it feels like that. So I do wonder, could you like stress test the AI output sometimes by asking it for the short thing and be like, ooh, really quickly, good or bad?

00:37:02.44 [Moe Kiss]: I do, I definitely do. But then I end up editing it. And I’m like, I should have just written

00:37:07.08 [Julie Hoyer]: it myself, it would have been faster. Always, always. And we thought we were going to talk about AI

00:37:12.20 [Michael Helbling]: in this episode. Yeah, well, it’s, but it is wise because it in a lot of ways in this current where we are right now with AI, AI as an analytical contributor is very much in the look what I can do kind of phase. And, and it’s sort of like when you think about like coaching a junior teammate, if you want to think about AI like that, it’s sort of the same kind of thing is like, all right, strip all that out. You don’t have to say all that, you’re going way too far, you’re trying to impress because you have cool, you know, it’s like, don’t try to blind them with science, just get in, get out, say what’s important, you know, yeah. So that’s almost sort of how I feel about it. Because it’s like, yeah, it’s trying to do way too much. It’s like, look at the cool stuff I can do. And it’s like, mom, look, look at me, look at me. Like, yes, you’re very smart,

00:38:07.40 [Julie Hoyer]: shut up. Very good.

00:38:17.88 [Val Kroll]: Well, I can go with another one that’s not continuing on this like beautiful thread that we’ve been weaving. We’ll find a link, we’ll make it, we’ll ask AI to make it.

00:38:28.76 [Tim Wilson]: Wait, Tim, did you do your two books, though? Because I had cut it off.

00:38:31.96 [Julie Hoyer]: No, my twofer was just the two books. The two books.

00:38:34.84 [Tim Wilson]: Cole Knaflic and Stephen Few. So yeah.

00:38:37.40 [Val Kroll]: Another experimentation heavy one, but another one that I have sent a link to these articles, maybe more than anything else. It was actually a Medium post, it was a collection of Medium posts, I should say, that was on Towards Data Science. And it was written by the Skyscanner engineering team. And it’s the overarching kind of umbrella title of content is Chasing Statistical Ghosts in Experimentation. And not only does it like break down some of the common like myths, but it’s the things that people still struggle to like fully understand why it’s not effective to run on it. So this is actually very similar to Julie’s first one in that it does produce a lot of visuals, although they are not interactive, to kind of illustrate exactly what the issues are with people having these like mental models. The one that I’ve sent the most, and there’s like four in the series, I believe, is the first ghost, which is it’s either significance or noise. And there’s like one quote in there, like towards the middle, and it’s about like, experiments don’t work towards significance and comparing relative significance of p-values outside of the thresholds is a mistake that will lead to a lot of false positives. But the number of times and I wish that there was like a button I could press and it would like zap people in their chairs, if they said like, well, we’re trending towards significance. No, but it’s so well put, they like break it down into like its smallest little pieces, and kind of like build it all back up together. So anyways, it’s just every every part of this was so well done. But yeah, definitely kind of come back to this one quite a few times.
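
[Editor’s sketch] The “trending towards significance” trap Val describes can be illustrated with a small simulation. This is a minimal sketch, not taken from the Skyscanner series: it assumes an A/A test (both arms share the same true conversion rate, so every “significant” result is a false positive) and a pooled two-proportion z-test. The function names, sample sizes, and peeking schedule are all hypothetical choices for illustration.

```python
import math
import random


def two_sided_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no variation observed yet, so no evidence of a difference
    z = (conv_a / n_a - conv_b / n_b) / se
    return math.erfc(abs(z) / math.sqrt(2))


def simulate_false_positive_rates(n_experiments=500, n_per_arm=1000,
                                  peek_every=100, rate=0.10,
                                  alpha=0.05, seed=42):
    """Simulate A/A tests (hypothetical parameters; no real effect exists).

    Returns (peeking_rate, fixed_rate):
      peeking_rate: share of experiments where ANY interim look had p < alpha
      fixed_rate:   share where only the single final look had p < alpha
    """
    rng = random.Random(seed)
    any_peek_hits = 0
    final_look_hits = 0
    for _ in range(n_experiments):
        conv_a = conv_b = 0
        hit_at_some_peek = False
        for i in range(1, n_per_arm + 1):
            conv_a += rng.random() < rate
            conv_b += rng.random() < rate
            if i % peek_every == 0:
                if two_sided_p_value(conv_a, i, conv_b, i) < alpha:
                    hit_at_some_peek = True
        final_p = two_sided_p_value(conv_a, n_per_arm, conv_b, n_per_arm)
        any_peek_hits += hit_at_some_peek
        final_look_hits += final_p < alpha
    return any_peek_hits / n_experiments, final_look_hits / n_experiments


if __name__ == "__main__":
    peeking_rate, fixed_rate = simulate_false_positive_rates()
    print(f"False positives when stopping at any significant peek: {peeking_rate:.1%}")
    print(f"False positives with one pre-planned final look:       {fixed_rate:.1%}")
```

Under these assumptions, the single pre-planned look should land near the nominal 5% error rate, while acting on whichever interim look happens to cross the threshold produces noticeably more false positives. The p-value wanders as data accumulates; it does not “trend” toward a destination.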

00:40:28.76 [Julie Hoyer]: Yeah, Val, you you turn me on to those and those are amazing. Great, like, ground yourself be like, I’m getting lost in the sauce, like let me go read my articles again for a second.

00:40:42.60 [Tim Wilson]: That’s awesome. It’s a good series. I feel like Julie, there’s a natural add on to that around

00:40:48.20 [Julie Hoyer]: experimentation that maybe you could yeah, I think I know which one you’re talking about.

00:40:56.52 [Julie Hoyer]: My well-thumbed through book with tons and tons of notes in the margin that I’ve actually read just the first five chapters about three times, I’ve done two book clubs on it. Field Experiments, very simple, nice straightforward name. Really great book though, this was actually recommended to me and Tim when we worked with Joe, he was leaving at the time Search Discovery and we were running a randomized controlled trial with a client and it was put on me to, you know, continue it, do the analysis and run the next one and I was like, holy shit, like, what am I supposed to do? And Joe just sends us a link, he’s like, it’s fine, buy this book, read the first three chapters, you know, you guys will be good. Julie, you got this and you did. Yeah, it was

00:41:46.52 [Tim Wilson]: a little scary. I read it and I was like, I was like, wow, ooh, Julie, you got this.

00:41:52.44 [Julie Hoyer]: Yeah. Honestly, thank God.

00:41:55.80 [Tim Wilson]: You know Joe’s other one’s number, so give it a go.

00:41:57.80 [Julie Hoyer]: Yeah, thank God, he still could contact Joe. Also, thank God I was a math major and could read mathematical equations, but it is a really good book. Like for a dense book, a very informative book, again, that’s one that just set such a good, clear foundation. Like the way they write about these complex theories of running these statistical tests, randomized controlled trials, like again, like the magic of randomization. I mean, that Shiny app I talked about at the beginning came from the same phase of life as reading this book. And it was so good and I still

00:42:36.60 [Tim Wilson]: go back. I remember that. I remember hitting that. That was like, yeah, my favorite phrase now.

00:42:41.96 [Julie Hoyer]: Yeah, really, if you need like a crash course in the foundations of randomized control trials, I really do highly recommend that book. Even the first five chapters, Joe said three. I found I needed to go at least to five. I think there’s 10 chapters total. Really good.

00:43:00.36 [Val Kroll]: As a member, former member of one of the book clubs that you ran for that book. You remember, I think I was in your second one. That was one of the densest books. It’s not a quick read, but it’s like the pieces of valuable nuggets per word is probably the highest density of any book. I’m like, oh, there was like two paragraphs and there was like three light bulbs that went off. So, and really good examples in that book too. So yeah, I like that in stories.

00:43:29.40 [Moe Kiss]: Good ones. Tim, you’ve got to talk about that. There’s one you’ve got to talk about. I’m dying

00:43:34.68 [Tim Wilson]: to hear it. Was it possibly First, Break All the Rules? Okay. This is one where Michael accused

00:43:44.28 [Moe Kiss]: me of not having empathy. I was surprised to see this on a list with your name. So first off, Tim,

00:43:52.60 [Michael Helbling]: it’s not an accusation. It’s just an observation.

00:44:02.76 [Tim Wilson]: Just do your fucking job. Yeah. So First, Break All the Rules: What the World’s Greatest Managers Do Differently by Marcus Buckingham. And there were a couple of editions and other people wrote with them. And it’s StrengthsFinder is what gets like all the play. And I’m not a fan of StrengthsFinder, which is tied to Now, Discover Your Strengths. But it was a two-book pair. First, Break All the Rules; Now, Discover Your Strengths. And I read it. It was like required reading early 2000s for managers at the company I was at. And to this day, it gave me a lot of confidence when I started working with people who just weren’t the right fit for the job they were in and getting comfortable with this idea of like skills versus talents. And you can you can teach skills, you can’t teach talents. And that doesn’t mean you can’t raise people up to get better. But if somebody is just like not good at data visualization or not good at building trust or not good at client communication or whatever it is, you can give them training and we have this tendency to say, well, that’s their deficit. They’re deficient in that area. Let’s spend as much time as we can coaching them and training to to bring them up. And when you start to recognize that it’s like, no, that’s just not them. That doesn’t energize them. The best you can do is get them to a base level of performance. That was like one big aha from the book. The other one that like really blew my mind because the way they write about it is like so true is that our tendency, if we’re managing a team is to spend the most time with our low performers because it’s like the idea that the lowest performer is what the team is going to be judged as. And they’re the people who need to be raised up. The rock stars, you’re like, they got this. So we’ll just dump more and more stuff on them. 
And the book makes a really, really strong case for saying you’re you’re shooting yourself in the foot, you should be spending, you should be supercharging the rock stars. And you need to support the lower performers, but it’s not really your job to try to make a square peg fit in a round hole. Your job is to see if there is a role you can get them into, where they will thrive and become rock stars, but trying to coach and train when they’re just not a fit. I mean, that’s literally I read that 20 years ago. And it’s one that I multiple times, I will see it, I will be interacting with somebody, I will give honestly, even though I am kind of a jackass, like I go in with a presumption of good intentions and good capabilities. And I’ve, especially with analysts, I have gotten more and more confident over the years of somebody who’s just not, when you start reading their backstories, it also often was somebody who is really struggling. And they like the idea of punching buttons from the data and getting glorious insights and just have zero intuition or any of the compulsions that analysts do that make them good analysts. And I’ve got like names in my head of like, they’re never going to thrive in this. And the best thing that can happen for them is to find their way into another area. So yeah, that’s weird. That’s like a management book. But I find myself coming back to it.

00:47:36.04 [Michael Helbling]: And would you say, Tim, this has been more applicable in your work life or your podcast life?

00:47:44.52 [Tim Wilson]: Only, only so many roles I can shift people around into and I have a little control.

00:47:50.44 [Michael Helbling]: No, I’m sorry that I made a joke about it. Because actually, I see this in you, Tim, like you doing this and like how it affects how you interact with people. And I think I’ve learned some of these same lessons over the years in managing teams and people of like figuring out how to shape their role to be help them be as effective as they can be based on sort of what they’re what they’re naturally inclined towards versus sort of what you sort of want them to do. And I like that puzzle of figuring out sort of where people fit. It’s, it’s fun. Not always, you’re not always allowed in every environment to to puzzle with it as long as you’d like to. But

00:48:31.24 [Tim Wilson]: it’s the fact is spending more time with your stars is a lot more fun and energizing helps you grow as well. So like that book, giving the permission to say, you don’t feel bad that you’re like collaborating with somebody who is everyone thinks of as being like the star on your team. Like that’s here’s here’s the reasons for why that’s actually the right thing to be doing. Again, not neglecting people. It’s not like it’s a complete yeah, binary. But yeah, it’s sort of in

00:49:02.36 [Michael Helbling]: the same vein. It goes way back. I there was a Radiolab episode back before I even listened to podcasts. This is like on the radio station like back in 2010. And they were discussing like how people use emotion and logic and decision making. And they had a specific case of a person who’d lost the function in their brain that allowed them to bring emotion into decision making. And so all their decision making was completely logical only. And as a result, they were actually paralyzed in every decision they would make. And it literally destroyed their life. Because they they couldn’t decide between like, Oh, I need to sign this document, should I use a blue pen or a black pen? And then it would go back and forth from the pros and cons of a blue pen or a black pen to sign and then everything they ever did, go to the grocery store, they couldn’t pick which toothpaste to buy like, and it was really fascinating to get a window into this idea that so often emotion drives decision making more or as much as logic does and sometimes a lot more than logic does in a lot of environments. And that was another big like light switch for me. And around that same time, I was reading a book called Switch by Chip and Dan Heath, which kind of goes into some similar concepts about how to kind of be influential or make people see what you’re trying to say through different ways of kind of reasoning and and providing good examples of things and stuff. So that was sort of a time where I was sort of like, okay, yeah, how do I get people to like, engage with decision making? And also how do I this all goes back to me trying to figure out how to leverage who I was in the context of analytics, let’s just be honest about it, because like, I’m, I wouldn’t call myself a traditional analyst by any stretch. 
And so by kind of learning some of these lessons and watching sort of the emotional process of decision making, it gave me really good hooks into, okay, here’s how I can engage with people because I can feel the emotions coming off of people a lot of times and kind of intuit what to do. Whereas my ability to like create amazing analyses like Tim and with perfect visuals and everything is not, I have good skills, but they’re not as good as yours, Tim, let’s be honest. And so as a result, like, you know, I had to fool people into doing what I

00:51:26.36 [Tim Wilson]: wanted them to do. So it’s almost becoming like a trope or like conventional wisdom that people aren’t, they don’t make decisions based on the data and like they make it based on emotions. And then that sometimes that gets used as like weaponized against analytics, like how do you square that in a way that’s healthy because it gets, it gets treated like so throw away like, well, then what the hell are we even doing here? It’s such a balancing act.

00:52:06.36 [Michael Helbling]: I feel like there, people get a hold of this information and do seek to manipulate it. And I feel like that’s, at some point, like that kind of behavior is going to get caught and it’s going to have a limiting function. And so you shouldn’t do that. It’s sort of like if you’re a company

00:52:27.08 [Tim Wilson]: that’s in the public. People being being manipulative and playing to emotions. Yeah, yeah, yeah. Isn’t okay. Gotcha. So that’s like the whole point of Choiceology, right? And that’s why I love

00:52:37.08 [Moe Kiss]: that show so deeply is because like, each episode is going into one of those biases and how it shows up and the decisions that we’re making. And like, and it’s, I mean, Katy Milkman is just so good at explaining what sometimes they’re like, quite technical, scientific studies in such a relatable way. But I just, I don’t know if I see it being weaponized. I’m more, I actually sometimes feel, I see it the other way, which is that I see data people being like, data decisions should totally be based on logic and what the data is saying. And I, I have very much come to this piece of like, intuition matters more. That is a different piece of data that is all your past experiences. And that also is worthy of consideration when you’re making a decision. There are other quantifiable data points that you also include, but like the idea that someone’s only going to make a decision based on those without other factors like intuition, what you’re like, I don’t know, maybe. Is that, is that like when you kind of intuition together?

00:53:40.84 [Tim Wilson]: You should be bringing together like facts & feelings.

00:53:45.32 [Michael Helbling]: Somebody should run with that. Somebody should run with that. But yeah, that was the other word, Moe, that I was going to bring into it as well. Intuition sort of runs this path as well, because people will rely on that more than they will rely on external facts and figures. And like, there’s that famous Jeff Bezos quote of like, oh, if the numbers look different to you than your intuition, then probably trust your intuition, you know, that kind of thing. And, and in reality, it’s true. Like our, our, and in the way our minds work as humans, there is a really good book on storytelling by, I think Will Storr and one of the quotes out of there was basically like, we look to fit patterns. So if the data fits our model, then we’ll be more willing to accept it. And if it doesn’t, we’re more, more, less likely to onboard it, just because like the way our human minds work, we’re trying to fit things in. And so if it’s sort of like counter to what our intuition or, or what we think is true of the world, but also when you look at even your own decision making process when you have different emotional states. So if you have high cortisol levels and anxiety, your decision making is actually impaired as compared to like, lower levels of anxiety or, or more calm feelings. Like it’s just true. Like you, you make different decisions and those don’t smirk. All of you are laughing and smirking because you know it’s true. It’s just hitting home for me. I’m like, yeah, yeah. And we think about that. So, so think about that for like a CMO who is like literally these next few campaigns literally mean their job. And you were coming in with some analysis that need them to make decisions and they are so high-strung. Like how do you even pull some of the action out of the room so they can get to a good place to make good decisions sometimes? It’s so tall. It’s like you end up in a therapy role practically when you’re trying to provide analysis. 
And it’s, it’s not like you should be doing that, but it’s sort of like a reality of the work environment sometimes. So we don’t always do a good job. But like there’s all this data that, you know, Google did that huge project about sort of how people perform maximally in their jobs. And like when you provide the right amount of psychological safety and those kinds of things like people’s performance improves, like there’s so much data to support all of this stuff. And so the idea that emotion shouldn’t have a place or isn’t involved in decision making, like you really kind of like miss that at your own peril. And it’s not just sort of like, how do I feel about you? It’s like, how is everything around me happening? And it could be nothing to do with you walking in somebody’s office with like an amazing analysis that you’ve really thought through and prepared very well. And the meeting might go super poorly and just has zilch to do with you. It’s because they got screamed at in the meeting before this, and they’re still coming down off of that. And there’s really nothing you can do about it except maybe come back Monday and talk about it again and hope that they’re in a little better place.

00:56:45.32 [Tim Wilson]: When there’s like a, like another corollary, which is separate from that is like recognizing

00:56:50.92 [Julie Hoyer]: fear and shame, like negative emotions that the goal, like if you can get them emotionally on

00:57:00.28 [Tim Wilson]: as a partnership, it goes to kind of the trust, it goes to all of the pieces of this, any idea that you’re going to walk in and like put up a chart that makes somebody look bad or surprises them in a negative way. You know, even if you’re like, oh, I’d make these four people really happy, but this person, it makes them look like I’m finally calling bullshit on them. And that’s going to be great because my manager and their manager are going to love me because I’m going to help them win this battle. Like that is a short term win. And you just, like much, much

00:57:32.76 [Michael Helbling]: better going to be gunning for you every single time from here on out. Even if they’re a great

00:57:37.88 [Julie Hoyer]: person who doesn’t want like much, much better to have them along on the journey so that they too

00:57:45.16 [Tim Wilson]: are vested in like, it’s like asking the question like, what would you need to see in order to make this decision? Or what would you like to bring them on the journey where they are, I don’t know if that doesn’t go, that’s trying to play to the emotions, marrying it with the data to say, let’s think about how would we feel if we saw that? How would we feel if we saw that take a little bit of the sting out of the, what does this mean for my self-worth and my professional career and make it more about, hey, we’re doing something good for the company. Wow. Damn, that’s me. I’m sorry. That’s too much lip service to soft skills. I love it. I mean, maybe what we’re

00:58:27.08 [Michael Helbling]: finding out, Tim, is like, all this stuff really matters in a world of AI. There’s not a word I’ve

00:58:32.44 [Tim Wilson]: said that I haven’t just got straight from Claude. Like, I was just like, and now they’re talking about emotions. What should I say? It actually bugs me. Oh, go ahead. Well, no, go ahead, Michael.

00:58:44.84 [Michael Helbling]: No, it’s a sort of a tangent, but like lately, Anthropic has been releasing sort of these things about how Claude has like these emotions that it expresses. And it really rubs me the wrong way that they even frame it like that, because I’m like, you can’t be teaching people to like emotionally support an AI. Like, it’s not a good way to deal with LLMs in my view. But again, you know, whatever, what do I know?

00:59:15.80 [Val Kroll]: Well, you made me think of another quick one, making sure someone’s ready to hear a message, especially if it’s a difficult one, episode 240, Taylor Buonocore Guthrie. The questions, that’s one that I definitely reach back for a lot. On the scale of one to 10, where, you know, one is a dumpster fire, 10 is the best day of your life, where are you? And so if they say, four, because I just got my ass chewed by, well, you’re like, well, we’re gonna save this for Monday. And we’re gonna go ahead and come back. Need a couple more days. But yeah, no, that’s a, that was, I learned a lot from, from that episode from Taylor and definitely have returned to that one. So that, that’s another one I should have put on there for

01:00:01.56 [Michael Helbling]: this episode. That’s a good one. All right, we do have to start to wrap up. This has been a really fun conversation. I think all of you, there’s been some really amazing insights. So trying to empathetically put myself in the position of a listener, I think we had some good stuff there. So hopefully it’s helpful. You know, we’ll, we’ll wait and check the

01:00:24.84 [Tim Wilson]: median, median listen length and let the data tell us whether this was good or not.

01:00:29.40 [Michael Helbling]: Yeah, that’s right. The algorithm will tell us. Anyway, as you’ve been listening, I bet you have things that you found in your career that are super helpful and guide you even in an AI kind of environment. We’d love to hear from you. So yeah, please reach out to us. The best way to do that, you can do that through our website. You can also do that on the Measure Slack or on LinkedIn, or via email at contact at analyticshour.io. And as you listen to the show, leave us a rating or review on whatever platform you use us on. We’d love to hear those. We’d love to see them. It helps us out. And if you love to rock a sticker on your laptop or your phone case or something like that, we’ve got Analytics Power Hour stickers and you can order those as well on our website at analyticshour.io. So yeah, thanks again, everybody. Moe, Julie, Tim, Val, this is really nice. Yeah, I’m feeling really nice. I feel good. All right, this kind of felt like a hug. Yeah, I feel more prepared to face the future. And I think no matter what the future holds, I think I speak for all my co-hosts when I say

01:01:41.88 [Announcer]: keep analyzing. Thanks for listening. Let’s keep the conversation going with your comments, suggestions and questions on Twitter at @analyticshour, on the web at analyticshour.io, our LinkedIn group, and the Measure Chat Slack group. Music for the podcast by Josh Crowhurst. Those smart guys wanted to fit in. So they made up a term called analytics. Analytics don’t work.

01:02:08.84 [Julie Hoyer]: Do the analytics say go for it no matter who’s going for it? So if you and I were on the field, the analytics say go for it. It’s the stupidest, laziest, lamest thing I’ve ever heard for reasoning

01:02:20.28 [Julie Hoyer]: in competition. Okay, one of my fallback ones I have to say to that point, Michael, I was trying to remember, I always like reference this one idea. I was like, I knew I listened to it on a podcast and I was trying so hard to remember what it was. It was from this podcast. So I put it in there as a fallback, but it was this podcast that I heard. I mean, please do bring that one up.

01:02:43.80 [Julie Hoyer]: I was like, that’s maybe a little awkward, but I’ll throw it in there just in case.

01:02:51.56 [Michael Helbling]: I would even like that episode very much. So I’m glad you got something good. Yeah, I didn’t mean it either.

01:03:00.68 [Julie Hoyer]: Which is funny because, yeah, we don’t have to talk about it now. We don’t know. We can talk about it. It’s fine.

01:03:07.40 [Tim Wilson]: That’s East like Denison. Oh, okay. Yeah, that sounds really tough. Yeah.

01:03:12.92 [Michael Helbling]: Shut up, Val. Columbus is not that big, but 45 minute commute in Columbus. That’s a big deal.

01:03:19.56 [Val Kroll]: No, you said South side of Columbus. It just sounded like… Oh, that’s not you. You come from Chicago.

01:03:27.72 [Michael Helbling]: No, South side of Chicago, yeah, has a rep.

01:03:32.36 [Julie Hoyer]: See, I feel like I should be drinking a glass of wine to tell that one. Do I? Okay. Parameters here before I go off and don’t do the format of the show, right? Also, I feel like I’ve picked up a slight Southern twang from being in Mississippi for two days. So if you hear it… Oh, this really is a… I’m not actually background.

01:03:53.48 [Tim Wilson]: Dude, you become vaguely racist and also misogynistic. Hey, easy. I’m not trying to defend Mississippi.

01:04:02.20 [Julie Hoyer]: But do we want to give the background of where it came from or how you first…

01:04:07.56 [Julie Hoyer]: Yeah, of course. …came across it and then how you used it? Is that kind of the format or am I misogynistic?

01:04:12.92 [Moe Kiss]: Just go with the vibe.

01:04:15.08 [Julie Hoyer]: But I’m not saying the vibe. And my freaking comment.

01:04:18.12 [Michael Helbling]: Hold on. No, you’re not freaking everyone out. Just testing my distance from the microphone.

01:04:21.64 [Julie Hoyer]: Do I need… Is my volume okay? Your volume is fine. That’s my hot mic. Your volume’s great. And you don’t have to be quiet.

01:04:27.96 [Julie Hoyer]: Yeah, I’m sorry.

01:04:30.36 [Julie Hoyer]: True. See, that’s the thing. Like, I tend to try to be quiet because my mic was so bad.

01:04:33.88 [Val Kroll]: I can tell by the way you move your mouth that you’re trying to be quieter. But also, I’m weirdly observant about things like that. No pressure.

01:04:45.00 [Moe Kiss]: Fuck my life. One second. I’m so sorry.

01:04:47.40 [Michael Helbling]: All right, perfect. I didn’t want to start right now. Okay, wait.

01:04:50.52 [Julie Hoyer]: Do we get to tell the story about Moe and her saying, fuck my life in our last episode? Oh, it’s in the outtakes.

01:04:56.28 [Tim Wilson]: It is totally in the outtakes. Oh, my God.

01:04:57.96 [Julie Hoyer]: Wait.

01:04:58.12 [Tim Wilson]: So good.

01:04:59.72 [Julie Hoyer]: Oh, that’s Moe’s tagline.

01:05:04.44 [Tim Wilson]: Yeah, Moe had to like, as we were losing co-hosts sporadically for various crises, and Moe left and thought that we would have finished wrapping up by then. So she just came back in with the hot fuck my life. And we just went with it. In the middle of the wrap up. Can I finish the wrap up now?

01:05:26.04 [Julie Hoyer]: And Moe was just going in the back. He’s like, can I finish wrapping?

01:05:29.72 [Julie Hoyer]: And she was like, oh, my God. I was like, yes.

01:05:33.88 [Moe Kiss]: I think I actually said fuck my life again.

01:05:36.28 [Michael Helbling]: Yeah, you did. You did. Yeah.

01:05:38.60 [Moe Kiss]: Oh, jeez. Anyway. Okay, good to go. It was great.

01:05:40.52 [Michael Helbling]: All right.

01:05:41.56 [Tim Wilson]: Here we go. All right. We’ll get started in five. I’d like to say right now, Tony, this is going to be the perfect. There will be what you went through on the last one. This one is smooth as silk. Rock flag and do your fucking job.